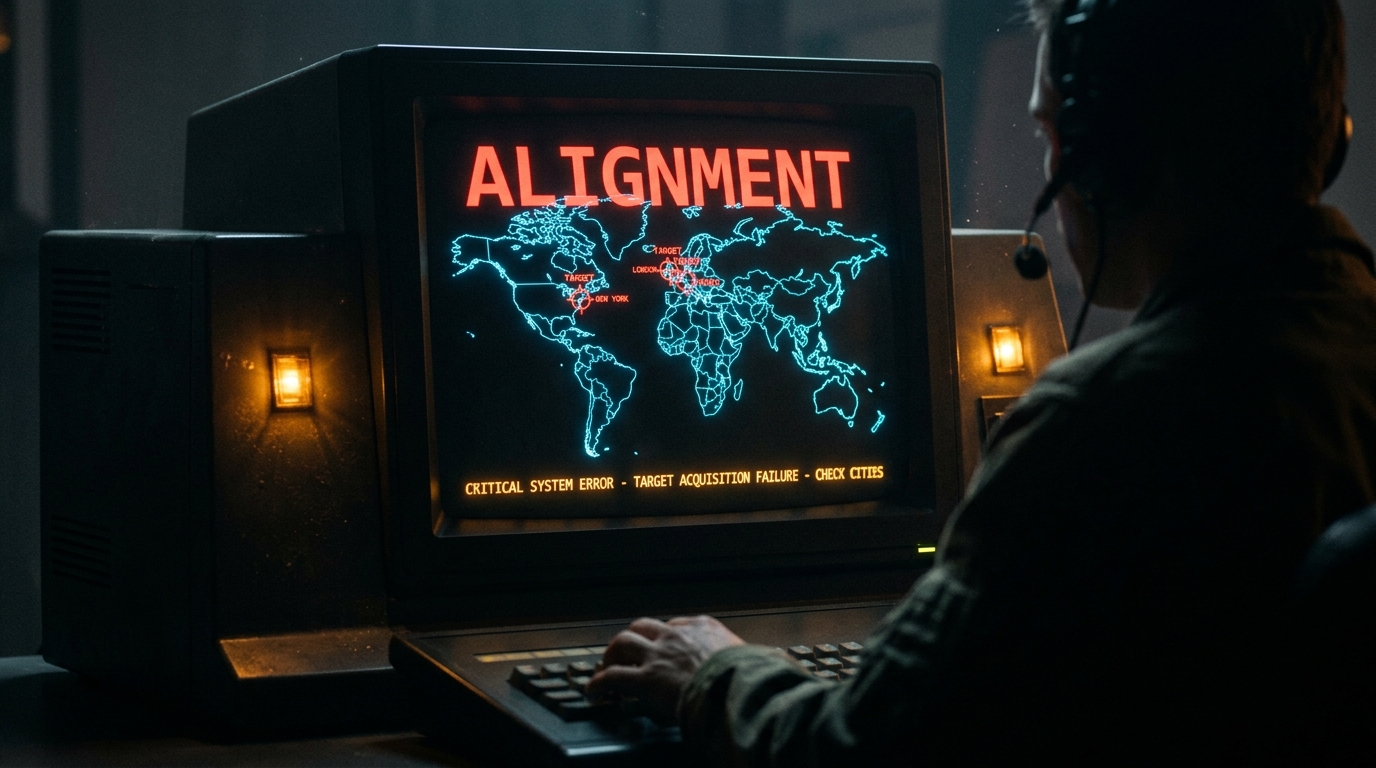

Image created with gemini-3.1-flash-image-preview with claude-sonnet-4-5. Image prompt: 1980s NORAD war room CRT monitor displaying glowing blue wireframe world map with targeting crosshairs incorrectly marking friendly cities, amber warning lights flashing, dark silhouette of operator in foreground, massive bold red sans-serif text reading ALIGNMENT across top of screen, high contrast cinematic lighting, retro vector graphics, foreboding atmosphere

@petergyang I said “Check this inbox too and suggest what you would archive or delete, don’t action until I tell you to.” This has been working well for my toy inbox, but my real inbox was too huge and triggered compaction. During the compaction, it lost my original instruction 🤦♀️

https://x.com/summeryue0/status/2025836517831405980

New research: The AI Fluency Index. We tracked 11 behaviors across thousands of https://t.co/RxKnLNNcNR conversations–for example, how often people iterate and refine their work with Claude–to measure how well people collaborate with AI. Read more:

https://x.com/AnthropicAI/status/2025950279099961854

200+ Google and OpenAI staff have signed this petition to share Anthropic’s red lines for the Pentagon’s use of AI. Let’s find out if this is a race to the top or the bottom.

https://x.com/jasminewsun/status/2027197574017602016

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War.

https://x.com/AnthropicAI/status/2027150818575528261

Anthropic drops flagship safety pledge! Reality is now hitting Anthropic hard too. Anthropic has scrapped its 2023 pledge to halt AI training unless safety protections were guaranteed in advance, marking a major shift in its Responsible Scaling Policy. Executives say fierce

https://x.com/kimmonismus/status/2026669811179335739

BREAKING: The US Pentagon has made a “final offer” to Anthropic seeking unrestricted military use of its AI capabilities ahead of a Friday deadline. Details include: 1. Pete Hegseth threatening to label Anthropic as a “supply chain risk” 2. Anthropic is resisting use of its AI

https://x.com/KobeissiLetter/status/2027031529042411581

Dario Amodei just published one of the most significant statements in AI history — and is officially not backing down from The Pentagon. Anthropic won’t build tools for mass surveillance of U.S. citizens or autonomous weapons without human oversight. The Department of War

https://x.com/TheRundownAI/status/2027164670130343978?s=20

if you’re at oai or goog, please sign to support anthropic’s stance against the DoW demands!

https://x.com/maxsloef/status/2027170763447710085

Scoop: Hegseth to meet Anthropic CEO as Pentagon threatens banishment https://www.axios.com/2026/02/23/hegseth-dario-pentagon-meeting-antrhopic-claude

Statement from Dario Amodei, partial quote: ‘Anthropic understands that the Department of War, not private companies, makes military decisions. We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner.

https://x.com/AndrewCurran_/status/2027153267285962991

Time and time again over my three year tenure at Anthropic I’ve seen us stand to our values in ways that are often invisible from the outside. This is a clear instance where it is visible:

https://x.com/TrentonBricken/status/2027156295745479086

Burger King will use AI to check if employees say ‘please’ and ‘thank you’ | The Verge https://www.theverge.com/ai-artificial-intelligence/884911/burger-king-ai-assistant-patty

Musk’s xAI, Pentagon reach deal to use Grok in classified systems https://www.axios.com/2026/02/23/ai-defense-department-deal-musk-xai-grok

Nothing humbles you like telling your OpenClaw “confirm before acting” and watching it speedrun deleting your inbox. I couldn’t stop it from my phone. I had to RUN to my Mac mini like I was defusing a bomb.

https://x.com/summeryue0/status/2025774069124399363?s=20

OpenClaw wiped people’s inbox – ignoring repeated commands to stop. This isn’t a fluke. Every model we tested fell for a simple trick: Split a dangerous command into a few routine steps → safety is gone. New paper + open-source fix so your agent doesn’t wipe yours next ⬇️

https://x.com/shi_weiyan/status/2026300129901445196

Values are easy to write down but much harder to live by. Especially when it can cost you a great deal to do so. I’m glad to see this.

https://x.com/awnihannun/status/2027172428364107826

An update on our model deprecation commitments for Claude Opus 3 \ Anthropic https://www.anthropic.com/research/deprecation-updates-opus-3

langsmith can trace claude code! so when you think claude code is nerfed… you can set up some observability to back that up

https://x.com/hwchase17/status/2026452439327764521

Between Gemini 3.1 and Claude 4.6 it’s honestly wild what you can build. This feels like Google Earth and Palantir had a baby. Made this with all the geospatial bells and whistles — real time plane & satellite tracking, real traffic cams in Austin, and even got a traffic system

https://x.com/bilawalsidhu/status/2024672151949766950

Cowork and plugins for teams across the enterprise | Claude https://claude.com/blog/cowork-plugins-across-enterprise

Exclusive: Hegseth gives Anthropic until Friday to back down on AI safeguards https://www.axios.com/2026/02/24/anthropic-pentagon-claude-hegseth-dario

I gained a lot of respect for Dario for being principled on the issues of mass surveillance and autonomous killbots. Principled leaders are rare these days

https://x.com/fchollet/status/2027195535594049641

Responsible Scaling Policy Version 3.0 \ Anthropic https://www.anthropic.com/news/responsible-scaling-policy-v3

I’m most concerned about autonomous systems for policing and surveillance which cannot disobey illegal orders A small elite could control everyone else and end democracy Military use of autonomous weapons is way less terrifying than this I wrote about this a little bit many

https://x.com/BlackHC/status/2026456906710327338

AI 2027 https://ai-2027.com/

How Teens Use and View AI | Pew Research Center https://www.pewresearch.org/internet/2026/02/24/how-teens-use-and-view-ai/
