Last week, Ethan Mollick of the Wharton School of Business asked Claude Code to create a printed edition of the full parameters of GPT-1. Claude Code built a set of 80 books, each around 700 pages, containing the 117 million floating-point numbers that make up the weights of GPT-1, along with a guide to doing inference with pen and paper. Claude then coded a functional online store that sold, printed, and shipped a limited run of 20 copies of each book… at cost. The entire product and the online store itself were researched, designed, and constructed by Claude.

https://weights-press.netlify.app

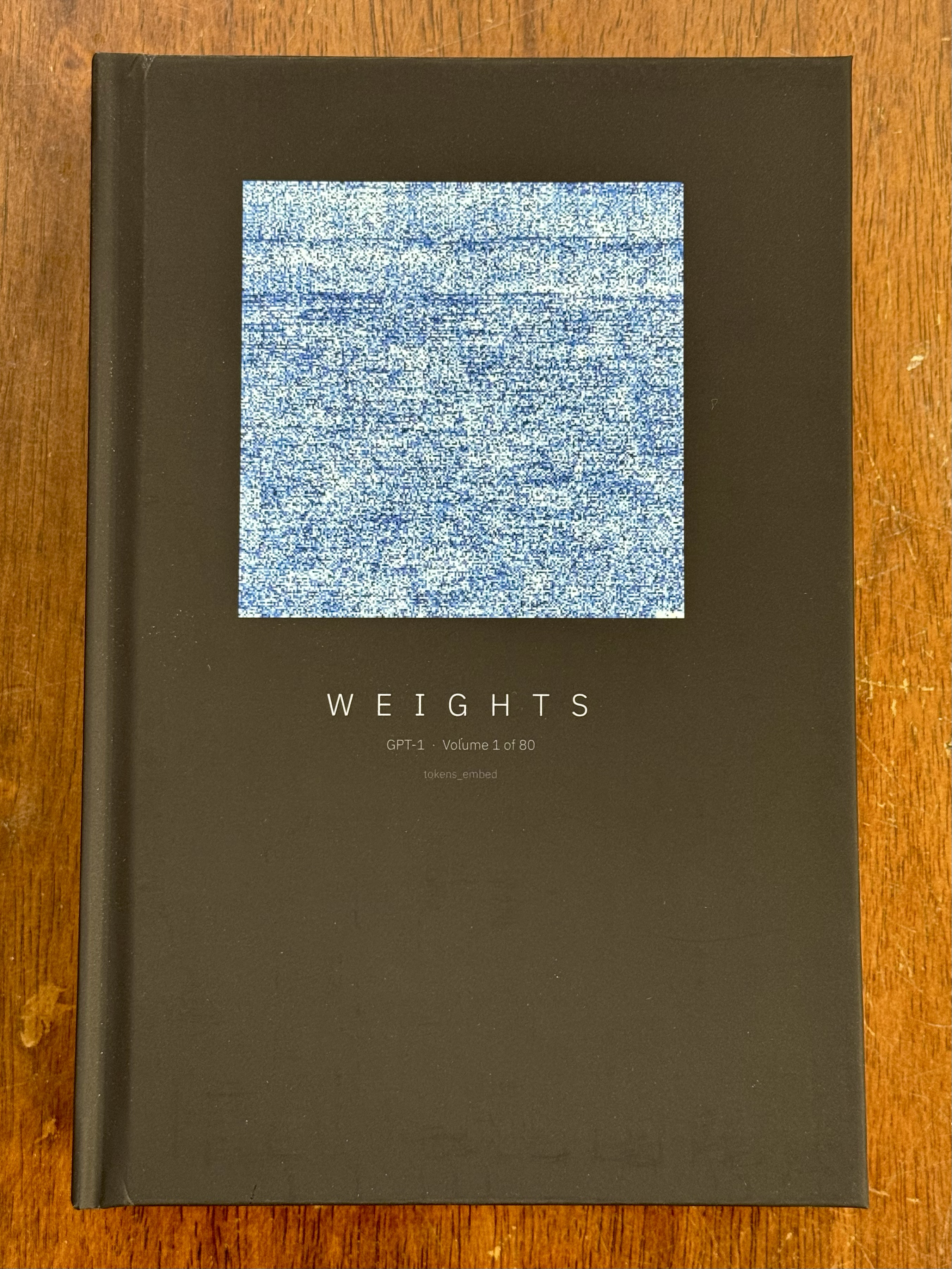

I’m now the proud owner of a sold-out first-edition Volume 1 of 80, a 730-page book whose title page reads:

Volume 1 of 80

tokens_embed

1,460,000 parameters

730 pages

Parameters 0–1,459,999
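For a sense of scale, here is a quick back-of-the-envelope check of the numbers on that title page. It is only a sketch: it uses the rounded 117 million total and assumes every volume holds about as many parameters as Volume 1, which the title page does not actually say.

```python
# Figures taken from the Volume 1 title page and the store description.
params_in_volume = 1_460_000
pages_in_volume = 730
total_params = 117_000_000  # rounded total quoted for GPT-1

# How many numbers are printed on each page of Volume 1.
params_per_page = params_in_volume // pages_in_volume

# Assumption: every volume is the same size as Volume 1.
volumes_needed = total_params / params_in_volume

print(f"{params_per_page} parameters per page")
print(f"about {volumes_needed:.1f} volumes at this size")
```

That works out to 2,000 numbers on every page, and the volume count lands close to the 80 books in the set.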

“These books contain every parameter of OpenAI’s GPT-1, a neural network trained in 2018. 117 million floating point numbers. This is everything the model knows.

Volume 1 includes a companion guide — a complete walkthrough of how to perform a single inference by hand, using nothing but these pages, a pencil, and patience.

Each cover visualizes the actual weight values printed inside that volume. Values near zero appear as deep indigo; larger magnitudes shift toward white. Every cover is unique — a direct rendering of what the network learned.”
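The cover description suggests a simple mapping from weight magnitude to color. Here is a minimal sketch of how that might look. The exact palette and scaling used for the real covers are not given, so the indigo RGB value, the linear blend, the normalization by the largest magnitude, and the `weight_to_rgb` helper are all my assumptions, and the sample weights are made up.

```python
# Assumed palette: CSS "indigo" for values near zero, white for large magnitudes.
INDIGO = (75, 0, 130)
WHITE = (255, 255, 255)

def weight_to_rgb(w, max_abs):
    """Blend linearly from indigo to white as |w| grows (hypothetical helper)."""
    # t is 0.0 for a weight of zero and 1.0 for the largest magnitude.
    t = min(abs(w) / max_abs, 1.0) if max_abs > 0 else 0.0
    return tuple(round(a + t * (b - a)) for a, b in zip(INDIGO, WHITE))

# Made-up sample values, not actual GPT-1 weights.
weights = [0.0, -0.02, 0.05, -0.1]
max_abs = max(abs(w) for w in weights)
pixels = [weight_to_rgb(w, max_abs) for w in weights]

for w, rgb in zip(weights, pixels):
    print(f"{w:+.2f} -> {rgb}")
```

Because the mapping depends only on magnitude, a weight of -0.1 and a weight of +0.1 get the same color, which matches the description of larger magnitudes shifting toward white.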
