About This Week’s Covers

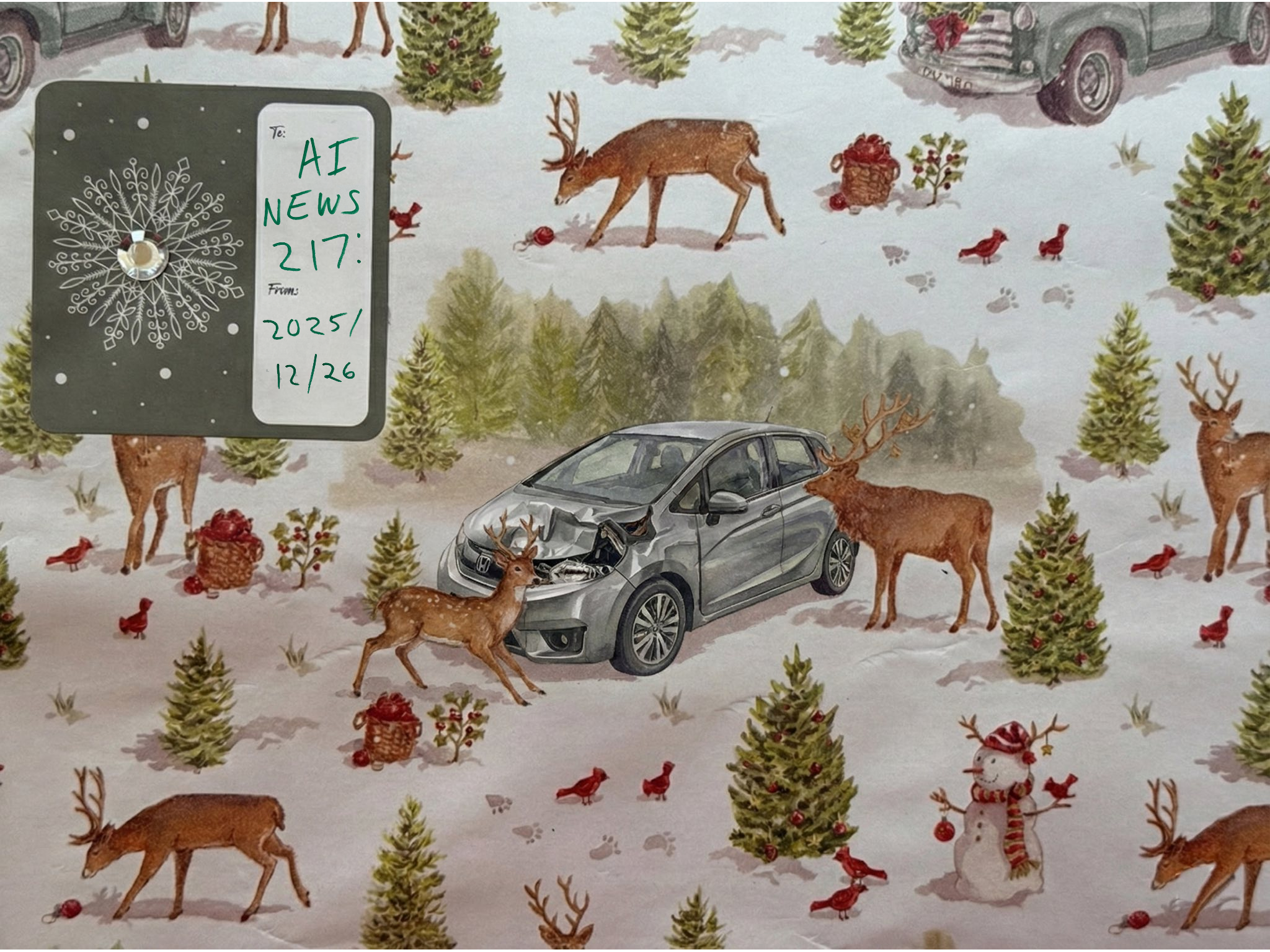

This week’s cover image is a fun remix using a photo of actual wrapping paper from our family Christmas and a photo of my car after I ran into a deer. An eight-point buck jumped out in front of me. The car is a 10-year-old gray Honda Fit.

I gave the wrapping paper photo to Gemini Nano Banana and asked it to incorporate the photo of the wrecked car, and add some deer curiously standing around looking at the damaged vehicle.

I used a Sharpie to write the title text and took a picture of it with my phone. I then used Photoshop to place my handwritten title text on top of the to/from area of the label from the actual wrapping paper.

The category cover images were generated by describing the wrapping paper theme to Claude, and then running a Python script to build category-cover prompts, which then ran through the Gemini API to make covers for all 53 categories. I’ve included my favorite below:

This week’s humanities reading is a poem called “Deer Fording the Missouri in Early Afternoon” by a poet named Kevin Cole.

I liked the poem for this week, because it’s a great description of what it’s like to watch an animal cautiously cross a river. And in the case of this week’s cover theme, the buck that stepped in front of my car was fording a six-lane highway. It came from my left, which meant it had crossed five lanes before it got to me. I didn’t see it because it jumped out from the car just in front of me, to my left in the passing lane.

Deer Fording the Missouri in Early Afternoon

By Kevin L. Cole

Perhaps to those familiar with their ways

The sight would not have been so startling:

A deer fording the Missouri in the early afternoon.

Perhaps they would not have worried as much

As I about the fragility of it all:

Her agonizingly slow pace, the tender ears

And beatific face just above the water.

At one point she hit upon a shoal

And appeared to walk upon a mantle,

The light glancing off her thin legs and black hooves.

I thought she might pause for a while to rest,

To gain some bearings, but instead she bound

Back in, mindful I suppose

Of the vulnerability of open water.

When she finally reached the island

And leapt into dark stands

Of cottonwoods and Russian olives,

I swear I almost fell down in prayer.

And now I long to bear witness of such things,

To tell someone in need the story

Of a deer fording the Missouri in the early afternoon.

https://www.poetryfoundation.org/poems/58671/deer-fording-the-missouri-in-early-afternoon

This Week By The Numbers

Total Organized Headlines: 280

- AGI: 2 stories

- AI Inn of Court: 1 story

- Accounting and Finance: 2 stories

- Agents and Copilots: 91 stories

- Alignment: 21 stories

- Anthropic: 17 stories

- Apple: 1 story

- Audio: 4 stories

- Augmented Reality (AR/VR): 21 stories

- Autonomous Vehicles: 4 stories

- Benchmarks: 36 stories

- Business and Enterprise: 34 stories

- ByteDance: 1 story

- Chips and Hardware: 4 stories

- Education: 5 stories

- Ethics/Legal/Security: 14 stories

- Figure: 7 stories

- Google: 17 stories

- HuggingFace: 5 stories

- Images: 23 stories

- International: 23 stories

- Internet: 5 stories

- Law: 1 story

- Llama: 3 stories

- Locally Run: 17 stories

- Manus: 13 stories

- Meta: 18 stories

- Microsoft: 1 story

- Multimodal: 34 stories

- NVIDIA: 4 stories

- Open Source: 7 stories

- OpenAI: 39 stories

- Perplexity: 1 story

- Podcasts/YouTube: 3 stories

- Publishing: 8 stories

- Qwen: 4 stories

- Robotics Embodiment: 28 stories

- Science and Medicine: 8 stories

- Security: 8 stories

- Technical and Dev: 56 stories

- Video: 15 stories

- X: 4 stories

- Zai: 2 stories

This Week’s Executive Summaries

This week was a relatively slow week. I organized 280 links, and 47 of them inform the executive summaries.

I’m going to start with a few links I like and some commentary that I thought was interesting, and then move into the news of the week, sorted by company name. You’ll know your progress because the bold company names will go in alphabetical order.

First, my favorite story!

Nvidia trained another robot strictly in simulations

It’s been almost two years since Dr. Jim Fan at NVIDIA trained a robot dog to balance on a yoga ball purely in simulation, then introduced the robot and the ball in real life and got it to work on the first try. That’s called zero-shot performance: it works on the first try in the real world.

In the case of the robot on the ball, the NVIDIA team created simulations of thousands and thousands of virtual worlds with randomized physics and gravity, and allowed the robot to learn how to handle a variety of chaotic environments. When our real world was introduced, the fact that our world itself is chaotic and unpredictable didn’t matter to the robot dog, because we were simply one of thousands of worlds, and it was unfazed.
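The randomization idea can be sketched in a few lines of Python. This is a toy illustration, not NVIDIA's training code: the `SimWorld` fields (`gravity`, `friction`, `ball_mass`) and their ranges are assumptions picked for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class SimWorld:
    """Hypothetical physics settings for one simulated training world."""
    gravity: float    # m/s^2
    friction: float   # surface friction coefficient
    ball_mass: float  # kg


def sample_world(rng: random.Random) -> SimWorld:
    # Ranges are illustrative assumptions, not values from NVIDIA's work.
    return SimWorld(
        gravity=rng.uniform(8.0, 12.0),
        friction=rng.uniform(0.2, 1.2),
        ball_mass=rng.uniform(0.5, 3.0),
    )


def make_training_worlds(n: int, seed: int = 0) -> list[SimWorld]:
    """Draw n randomized worlds from a seeded generator."""
    rng = random.Random(seed)
    return [sample_world(rng) for _ in range(n)]


worlds = make_training_worlds(5000)

# Earth gravity (about 9.81 m/s^2) sits inside the sampled range, so the
# real world looks like one more draw from the training distribution.
real_gravity = 9.81
gravities = [w.gravity for w in worlds]
covered = min(gravities) <= real_gravity <= max(gravities)
print(len(worlds), covered)
```

A policy trained across all of these worlds never gets to rely on one exact set of physics, which is the intuition behind the robot being "unfazed" by ours.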

This week, NVIDIA trained a humanoid robot to interact with the real world zero-shot, by training on tons and tons of videos and in simulations.

One of the challenges with humanoid robots is that they often struggle to walk and use their hands at the same time without a human controlling them.

NVIDIA essentially built a replacement for the human controller, and they trained it in a simulation. The simulation is able to give the computer controller perfect information coming from the robot: its exact positions, the forces it’s applying, and every detail in the robot’s system. That’s far more efficient than a human, who can’t be directly dialed into the robot and has a slower reaction time. The simulation also gave the virtual controller a full view of the entire room and all of the variables within it, which is more than a robot would “see”.

As the virtual expert manipulates the robot in the simulation, the expert starts to learn all of the different complicated sequences necessary to walk and manipulate objects and move around at the same time.

Nvidia then trained a second virtual controller that can only see what a real-world robot would see. This one learns by imitating the expert’s actions in thousands of simulations, but using only what the robot would see. The simulation varies the lighting, the surfaces, and the camera quality, and it even imitates sensor delays, so that the robot learns to handle a wide range of challenging situations.
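The expert-then-imitator setup can be shown with a deliberately tiny example. This is a one-dimensional toy, assuming a made-up task (steer toward a target) and a made-up noise level; it only illustrates the idea of a student copying a privileged teacher from degraded observations, not NVIDIA's actual method.

```python
import random


def teacher_action(true_offset: float) -> float:
    """Privileged expert: sees the exact state and steers straight at the target."""
    return -1.0 * true_offset


def robot_observation(true_offset: float, rng: random.Random) -> float:
    """What the student sees: the same state through a noisy sensor (assumed noise)."""
    return true_offset + rng.gauss(0.0, 0.1)


def train_student(episodes: int, seed: int = 0) -> float:
    """Fit a one-parameter student policy, action = w * observation,
    by least squares on the teacher's actions (plain behavior cloning)."""
    rng = random.Random(seed)
    num = 0.0
    den = 0.0
    for _ in range(episodes):
        x = rng.uniform(-1.0, 1.0)       # true state, visible only to the teacher
        obs = robot_observation(x, rng)  # degraded view given to the student
        act = teacher_action(x)          # label supplied by the expert
        num += obs * act
        den += obs * obs
    return num / den


w = train_student(10_000)
print(round(w, 2))
```

The learned gain lands close to the teacher's -1.0 even though the student never sees the true state, which is the point of the second controller.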

Nvidia lines all this up with the actual robot’s physical hardware and introduces it to the world.

With no real-world training, the robot was able to work on consecutive tasks for up to 54 iterations of challenges before it failed.

This is a little bit like METR’s agentic time-on-task chart that I love to share, where Claude is the best at agentic reasoning, with nearly five hours of work before it fails.

This robot can now do about 54 things before it fails. It’s absolutely amazing to follow the parallels in benchmarking and training.

The idea of using simulations to train real-world activities ties into my other favorite topic, segmentation and depthing. If a computer can see objects in the real world and identify them, it can simulate them in virtual worlds. The whole is greater than the sum of its parts, and all of the parts are starting to come together.

Visual sim2real: zero-shot deploy to the real world, with zero real data. Trained entirely in Isaac Lab.

https://viral-humanoid.github.io

Selected Commentary

Claude Code is essentially writing its own product now

“When I created Claude Code as a side project back in September 2024, I had no idea it would grow to be what it is today. It is humbling to see how Claude Code has become a core dev tool for so many engineers, how enthusiastic the community is, and how people are using it for all sorts of things from coding, to devops, to research, to non-technical use cases. This technology is alien and magical, and it makes it so much easier for people to build and create. Increasingly, code is no longer the bottleneck.”

“A year ago, Claude struggled to generate bash commands without escaping issues. It worked for seconds or minutes at a time. We saw early signs that it may become broadly useful for coding one day.”

“Fast forward to today. In the last thirty days, I landed 259 PRs — 497 commits, 40k lines added, 38k lines removed. Every single line was written by Claude Code + Opus 4.5. Claude consistently runs for minutes, hours, and days at a time (using Stop hooks). Software engineering is changing, and we are entering a new period in coding history. And we’re still just getting started…”

https://x.com/bcherny/status/2004887829252317325

Gen AI Website Traffic Share, Key Takeaways

This is a good chart showing Gemini’s march during 2025. https://x.com/Similarweb/status/2004113864347029663?s=20

12 Months Ago:

ChatGPT: 87.2%

Gemini: 5.4%

Perplexity: 2.0%

Claude: 1.6%

Copilot: 1.5%

December 2025:

ChatGPT: 68.0%

Gemini: 18.2%

DeepSeek: 3.9%

Grok: 2.9%

Perplexity: 2.1%

Claude: 2.0%

Copilot: 1.2%
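To make the year-over-year shift easier to read, here is a small sketch that computes the change in percentage points from the two lists above. The numbers are copied from the quoted figures; the function name is just for illustration.

```python
# Shares (in percent) copied from the Similarweb figures quoted above.
year_ago = {"ChatGPT": 87.2, "Gemini": 5.4, "Perplexity": 2.0,
            "Claude": 1.6, "Copilot": 1.5}
dec_2025 = {"ChatGPT": 68.0, "Gemini": 18.2, "DeepSeek": 3.9, "Grok": 2.9,
            "Perplexity": 2.1, "Claude": 2.0, "Copilot": 1.2}


def point_change(name):
    """Change in share, in percentage points; None if there is no year-ago figure."""
    if name not in year_ago:
        return None
    return round(dec_2025[name] - year_ago[name], 1)


for name in dec_2025:
    delta = point_change(name)
    label = "n/a (not in the year-ago list)" if delta is None else f"{delta:+.1f} pts"
    print(f"{name:<11}{dec_2025[name]:>5.1f}%  {label}")
```

ChatGPT is down 19.2 points and Gemini is up 12.8, while Perplexity, Claude, and Copilot each moved by less than half a point.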

Open Source Is Seven Months Behind the Frontier Models

“We benchmarked several open-weight Chinese models on FrontierMath. Their top scores on Tiers 1-3 lag the overall frontier by about seven months.” https://x.com/EpochAIResearch/status/2003178174310678644

GPT Is Confusing People

GPT Instant is really bad. GPT Thinking is really good. Most people don’t know the difference…

“A lot of people underestimate AI due to the confluence of 4 OpenAI choices:

1) GPT-5.x instant is not a very smart model

2) Most users are free users & the ChatGPT router sends them to instant often

3) The router calls everything GPT-5.2

4) Most people don’t know Reasoners exist”

https://twitter.com/emollick/status/2001840267155153362

SoftBank Owes So Much Money to OpenAI That I Can’t Tell What’s Happening

Reading these headlines… quick pop quiz… are things going well or not? What was the total investment by SoftBank?

Softbank fulfills $40 billion OpenAI backing, sources tell CNBC https://www.cnbc.com/2025/12/30/softbank-openai-investment.html?taid=6953e10b1534bb0001d46587

SoftBank races to fulfill $22.5 billion funding commitment to OpenAI by year-end

https://finance.yahoo.com/news/exclusive-softbank-races-fulfill-22-233202534.html

GPT Energy Usage Metric

“In fact the average ChatGPT query takes almost exactly as much energy as a Google search in 2008 (that is the last time Google clearly indicated the electrical consumption of a search).” https://x.com/emollick/status/2003749085468311853

Andrej Karpathy on Coding (He’s on the short list to follow)

“I’ve never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and between. I have a sense that I could be 10X more powerful if I just properly string together what has become available over the last ~year and a failure to claim the boost feels decidedly like skill issue. There’s a new programmable layer of abstraction to master (in addition to the usual layers below) involving agents, subagents, their prompts, contexts, memory, modes, permissions, tools, plugins, skills, hooks, MCP, LSP, slash commands, workflows, IDE integrations, and a need to build an all-encompassing mental model for strengths and pitfalls of fundamentally stochastic, fallible, unintelligible and changing entities suddenly intermingled with what used to be good old fashioned engineering. Clearly some powerful alien tool was handed around except it comes with no manual and everyone has to figure out how to hold it and operate it, while the resulting magnitude 9 earthquake is rocking the profession. Roll up your sleeves to not fall behind.” https://x.com/karpathy/status/2004607146781278521

Andrej Karpathy’s 2025 LLM Year in Review

This is worth reading.

https://karpathy.bearblog.dev/year-in-review-2025/

“2025 has been a strong and eventful year of progress in LLMs. The following is a list of personally notable and mildly surprising “paradigm changes” – things that altered the landscape and stood out to me conceptually.”

1. Reinforcement Learning from Verifiable Rewards (RLVR) “OpenAI o1 (late 2024) was the very first demonstration of an RLVR model, but the o3 release (early 2025) was the obvious point of inflection where you could intuitively feel the difference.”

2. Ghosts vs. Animals / Jagged Intelligence “2025 is where I (and I think the rest of the industry also) first started to internalize the “shape” of LLM intelligence in a more intuitive sense. We’re not “evolving/growing animals”, we are “summoning ghosts”. Everything about the LLM stack is different (neural architecture, training data, training algorithms, and especially optimization pressure) so it should be no surprise that we are getting very different entities in the intelligence space, which are inappropriate to think about through an animal lens. Supervision bits-wise, human neural nets are optimized for survival of a tribe in the jungle but LLM neural nets are optimized for imitating humanity’s text, collecting rewards in math puzzles, and getting that upvote from a human on the LM Arena.”

3. Cursor / new layer of LLM apps “Will the LLM labs capture all applications or are there green pastures for LLM apps? Personally I suspect that LLM labs will trend to graduate the generally capable college student, but LLM apps will organize, finetune and actually animate teams of them into deployed professionals in specific verticals by supplying private data, sensors and actuators and feedback loops.”

4. Claude Code / AI that lives on your computer Claude Code (CC) emerged as the first convincing demonstration of what an LLM Agent looks like – something that in a loopy way strings together tool use and reasoning for extended problem solving. In addition, CC is notable to me in that it runs on your computer and with your private environment, data and context. I think OpenAI got this wrong because they focused their early codex / agent efforts on cloud deployments in containers orchestrated from ChatGPT instead of simply localhost. And while agent swarms running in the cloud feels like the “AGI endgame”, we live in an intermediate and slow enough takeoff world of jagged capabilities that it makes more sense to run the agents directly on the developer’s computer.

5. Vibe coding 2025 is the year that AI crossed a capability threshold necessary to build all kinds of impressive programs simply via English, forgetting that the code even exists. Amusingly, I coined the term “vibe coding” in this shower of thoughts tweet totally oblivious to how far it would go :). https://x.com/karpathy/status/1886192184808149383

6. Nano banana / LLM GUI “Google Gemini Nano banana is one of the most incredible, paradigm-shifting models of 2025. In my world view, LLMs are the next major computing paradigm similar to computers of the 1970s, 80s, etc. Therefore, we are going to see similar kinds of innovations for fundamentally similar kinds of reasons. We’re going to see equivalents of personal computing, of microcontrollers (cognitive core), or internet (of agents), etc etc. In particular, in terms of the UIUX, “chatting” with LLMs is a bit like issuing commands to a computer console in the 1980s.”

“LLMs should speak to us in our favored format – in images, infographics, slides, whiteboards, animations/videos, web apps, etc. The early and present version of this of course are things like emoji and Markdown, which are ways to “dress up” and lay out text visually for easier consumption with titles, bold, italics, lists, tables, etc. But who is actually going to build the LLM GUI? In this world view, nano banana is a first early hint of what that might look like. And importantly, one notable aspect of it is that it’s not just about the image generation itself, it’s about the joint capability coming from text generation, image generation and world knowledge, all tangled up in the model weights.”

Adobe

“Adobe and Runway Partner to Deliver the Next Generation of AI Video for Creators, Studios and Brands”

Adobe has determined that it does not want to become Kodak. However, I’m not sure what the future will be for Adobe if it’s simply a wrapper for combining and editing AI content.

That said, Adobe is currently a wrapper for combining and editing organic, analog content, or digital video.

Over the past year, Adobe has flirted with relationships with various AI companies. The biggest one recently has been Google Gemini’s Nano Banana image-editing tool.

This week, Adobe announced it will partner with Runway on video.

Runway is considerably weaker than Google’s Veo or OpenAI’s Sora. Runway is pretty good at short clips and image-to-video, and I have no doubt that integrating it into Adobe saves the step of importing clips if you want to edit them. But I don’t yet see how Runway gets deeply integrated into the software.

The integration is a bit timid at first, since the only application that includes Runway is Adobe Firefly, not Adobe Premiere Pro.

It’s possible that, with Nano Banana, Adobe image editing could become quite powerful for object segmentation and removal, masking, backgrounds, text additions, and generative fills. I see Google as a great partner, but I’m not sure how Adobe and Runway are going to work together. It feels like something that will be tacked on at first.

If it can finally get integrated in a way that balances true organic creativity with prompting, I’m sure some people will use Runway in incredibly creative ways. It reminds me of when layers came out, to be honest. The idea of masking was considered almost cheating or witchcraft, but people were able to create incredible results through masks and filters and color correction. The creativity of the users is going to be the key here, and I’m looking forward to seeing what they do compositing generative elements into more original works.

Runway is going to have to build a better product than OpenAI’s Sora or Google’s Veo.

The announcement this week is limited to the fact that they’ll be working together, and that Adobe users will get sneak peeks and first looks at Runway releases. At this point it’s less about Adobe strategy than about positioning.

https://news.adobe.com/news/2025/12/adobe-and-runway-partner

Anthropic

Claude for Excel

“I’m hearing from many folks across finance industry that Claude for Excel is blowing their minds. The agentic coding takeoff but for other fields is coming in 2026.”

https://x.com/alexalbert__/status/2005670179045523595

Continued discussion of Claude on agentic benchmarks

Last week, one of the big stories was that Anthropic’s Claude was underestimated in an agentic benchmarking test that measures how long an agent can perform on its own without failure. Claude leapt to the top of the leaderboard after the adjustment. There were a couple more headlines I wanted to include this week that follow up on that.

“So, Claude 4.5 came in far above trend in the much-watched METR measure of the task duration that AI can accomplish autonomously at 4 hours 49 minutes. Interestingly, at the harder 80% success threshold, it is GPT-5.1 Codex Max that breaks the trend. In 2023, GPT-4 was a minute.” https://x.com/emollick/status/2002208335991337467

“We estimate that, on our tasks, Claude Opus 4.5 has a 50%-time horizon of around 4 hrs 49 mins (95% confidence interval of 1 hr 49 mins to 20 hrs 25 mins). While we’re still working through evaluations for other recent models, this is our highest published time horizon to date. ” https://x.com/METR_Evals/status/2002203627377574113?s=20

Introducing Bloom: an open source tool for automated behavioral evaluations

“We’re releasing Bloom, an open source agentic framework for generating behavioral evaluations of frontier AI models. Bloom takes a researcher-specified behavior and quantifies its frequency and severity across automatically generated scenarios. Bloom’s evaluations correlate strongly with our hand-labeled judgments and we find they reliably separate baseline models from intentionally misaligned ones. As examples of this, we release benchmark results for four alignment relevant behaviors on 16 models.” https://www.anthropic.com/research/bloom

“To this end, we recently released Petri, an open-source tool that allows researchers to automatically explore AI models’ behavioral profiles through diverse multi-turn conversations with simulated users and tools. Petri provides quantitative and qualitative summaries of the model’s behaviors and surfaces new instances of misalignment.

Bloom is a complementary evaluation tool. Bloom generates targeted evaluation suites for arbitrary behavioral traits. Unlike Petri—which takes user-specified scenarios and scores many behavioral dimensions to flag concerning instances—Bloom takes a single behavior and automatically generates many scenarios to quantify how often it occurs. We built Bloom to allow researchers to quickly measure the model properties they’re interested in, without needing to spend time on evaluation pipeline engineering. Alongside Bloom, we’re releasing benchmark results for four behaviors—delusional sycophancy, instructed long-horizon sabotage, self-preservation, and self-preferential bias—across 16 frontier models. Using Bloom, these evaluations took only a few days to conceptualize, refine, and generate.”

Here’s how Anthropic taught Claude how to browse the web

“I spent all of Christmas reverse engineering Claude Chrome so it would work with remote browsers.” https://x.com/pk_iv/status/2005694082627297735

“When you install Claude in Chrome, it creates a native messaging host on your machine.

Claude Code runs with --chrome-native-host and talks to Chrome over stdin/stdout using a binary protocol.”

“The protocol is simple but clever:

- 4-byte little-endian length prefix

- JSON payload with MCP tool calls

- Messages like navigate, screenshot, click flow back and forth.”
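The framing described in the thread (a 4-byte little-endian length followed by a JSON body) is easy to sketch in Python. This is a minimal illustration of that framing only; the payload fields (`tool`, `url`) are hypothetical and not the real message schema.

```python
import json
import struct


def encode_message(payload: dict) -> bytes:
    """Frame a JSON payload with a 4-byte little-endian length prefix."""
    body = json.dumps(payload).encode("utf-8")
    return struct.pack("<I", len(body)) + body


def decode_message(data: bytes) -> tuple[dict, bytes]:
    """Read one framed message; return the payload and any leftover bytes."""
    (length,) = struct.unpack("<I", data[:4])
    body = data[4:4 + length]
    if len(body) < length:
        raise ValueError("incomplete message")
    return json.loads(body.decode("utf-8")), data[4 + length:]


# Hypothetical tool call; the field names are illustrative, not the real schema.
frame = encode_message({"tool": "navigate", "url": "https://example.com"})
message, rest = decode_message(frame)
print(message["tool"], len(rest))
```

The length prefix is what lets the reader on the other end of stdin/stdout know where one JSON message stops and the next begins.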

“The extension is an MCP server. Claude Code connects as an MCP client. 19 browser tools follow the MCP spec – that’s why they’re all named mcp__claude-in-chrome__*”

“The catch? Only works with LOCAL Chrome. The native host has to run on the same machine as the browser. Claude Code expects to spawn a local process. That’s dangerous. A rogue website could do prompt injection to extract your personal data.”

“So I built a server that intercepts the browser socket and translates commands to CDP (Chrome DevTools Protocol). Claude thinks it’s talking to local Chrome. Commands actually run on Browserbase’s cloud browsers.”

“bonus if it wasn’t obvious: all the images in the tweet thread were made by nano banana pro”

Google

Google tests 30-minute Lecture Audio Overviews on NotebookLM

“Google NotebookLM is testing a new “Lecture” Audio Overview format with language selection support and upcoming British English narration.” https://www.testingcatalog.com/exclusive-google-tests-30-minute-audio-lectures-on-notebooklm/

“AI transcription from handwriting is now better than human level, and a very cheap model is as good as people.”

“Gemini 3 flash is as good at reading handwriting as the average human (pro is expert human level).

It is much better than both GPT-5.2 and Opus 4.5 with character level error rates of 1.43% and word level error rates of 2.74%. This is a 47-63% improvement over 2.5 Flash, the same leap we saw with pro.

At a fraction of a cent per page, this is a big deal.” https://x.com/HistoryGPT/status/2001341258577375297 https://x.com/emollick/status/2001676059864080577
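Character-level and word-level error rates like the ones quoted are commonly computed as edit distance divided by the length of the reference text. The sketch below assumes that standard definition (the thread doesn't spell out its exact method) and uses made-up strings.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (strings or lists of words)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def error_rate(ref, hyp):
    """Edits needed to turn hyp into ref, as a fraction of the reference length."""
    return edit_distance(ref, hyp) / len(ref)


# Made-up example: one dropped letter in a five-word line.
reference = "the deer crossed the river"
transcript = "the deer crosed the river"

cer = error_rate(reference, transcript)                  # per character
wer = error_rate(reference.split(), transcript.split())  # per word
print(f"CER {cer:.1%}  WER {wer:.1%}")
```

A single wrong letter counts as one character error but spoils a whole word, which is why word-level rates run higher than character-level rates on the same transcript.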

Hark (new company)

Brett Adcock (founder of Figure, the humanoid robotics company valued at $39B) is reportedly putting $100M of his own money into a new lab called “Hark.” The lab is building “human-centric AI” that can “think proactively, recursively improve and care deeply about people.” https://x.com/rowancheung/status/2001735646117757154

Exclusive: Figure CEO Brett Adcock Launches New AI Lab With $100 Million in Funding — The Information https://www.theinformation.com/briefings/exclusive-figure-ceo-brett-adcock-launches-new-ai-lab-100-million-funding

Manus

Meta buys Manus for more than $2 billion

It was one heck of a week for Manus. Manus was founded in 2025 and specializes in easy-to-build autonomous AI agents that can browse the web, write and deploy code, perform analysis, and manage workflows by breaking them down into small tasks. Manus is considered the state of the art in autonomous agent-building tools.

Earlier this week, Manus released DesignView, an extension of their agent that allows you to build imagery and design visual assets using agents. https://manus.im/blog/manus-design-view

But that same week, Manus was acquired by Meta…which is a slightly larger story…for more than $2 billion.

Last week, Manus posted that they were at a run rate of over $125 million, with growth of over 20% month over month. That must have been a little sneak peek of their value, since this week they were acquired. In less than a year, Manus has created more than 80 million virtual computers and served over 147 trillion tokens. It will be interesting to see what Meta does with Manus as they integrate it into the Meta suite of products. https://www.facebook.com/business/news/manus-joins-meta-accelerating-ai-innovation-for-businesses

Meta

Meta Open Sources Agent Tools (wild timing re Manus)

“OpenEnv: Meta × Hugging Face’s new open standard for agentic environments.”

“We’re thrilled to announce a new AgentBeats custom track: the OpenEnv Challenge: SOTA Environments to Drive General Intelligence, sponsored by the PyTorch team at Meta, Hugging Face, and Unsloth. Participants will compete to develop innovative, open-source RL environments that push the frontiers of agent learning, with a prize pool of $10K in Hugging Face credits, and the chance to be published on the PyTorch blog” https://x.com/ben_burtenshaw/status/2005655406522085482

MiniMax

MiniMax Launches M2.1: State of the Art Open Source Coding Tool

This is the first time I’ve seen MiniMax in the news since I’ve started this newsletter, but that doesn’t mean anything about the importance of MiniMax.

MiniMax was founded in December of 2021 and is considered one of China’s “AI Tigers,” a nickname for several Chinese artificial intelligence startups that are backed by large tech firms like Alibaba and Tencent. DeepSeek is probably the most famous one. Moonshot AI is now famous for Kimi. Zhipu AI has been in the news all fall, and now MiniMax is on my radar.

This week, MiniMax released M2.1, which was a major update to their open-source model that was designed for advanced coding and agents.

https://www.minimax.io/news/minimax-m21

MiniMax’s team comes from a vision background and the model has a lot of multimodal strengths. It also handles quite a bit of multilingual coding. M2.1 is extremely strong for building agentic workflows and has a long horizon for reasoning. It’s exceptionally good at Android and iOS development.

It’s open source, yet it’s right on the heels of the American frontier models. If you look at MiniMax’s release page, you can see it competing head-to-head with Claude, Gemini, and GPT-5.

Nvidia

“Nvidia buying AI chip startup Groq’s assets for about $20 billion in its largest deal on record”

“Nvidia has agreed to buy assets from Groq, a designer of high-performance artificial intelligence accelerator chips, for $20 billion in cash, according to Alex Davis, CEO of Disruptive, which led the startup’s latest financing round in September.”

“Nvidia is making its largest purchase ever, acquiring assets from 9-year-old chip startup Groq for about $20 billion.” “The company was founded by creators of Google’s tensor processing unit, or TPU, which competes with Nvidia for artificial intelligence workloads.” “Groq, which was valued at $6.9 billion in a financing round in September, framed the deal as a “non-exclusive licensing agreement,” with its CEO and other senior leaders joining Nvidia.”

https://www.cnbc.com/2025/12/24/nvidia-buying-ai-chip-startup-groq-for-about-20-billion-biggest-deal.html

https://groq.com/newsroom/groq-and-nvidia-enter-non-exclusive-inference-technology-licensing-agreement-to-accelerate-ai-inference-at-global-scale

Jim Fan highlights the year in developments

“I’m on a singular mission to solve the Physical Turing Test for robotics. It’s the next, or perhaps THE last grand challenge of AI. Super-intelligence in text strings will win a Nobel prize before we have chimpanzee-intelligence in agility & dexterity. Moravec’s paradox is a curse to be broken, a wall to be torn down. Nothing can stand between humanity and exponential physical productivity on this planet, and perhaps some day on planets beyond.

We started a small lab at NVIDIA and grew to 30 strong very recently. The team punches way above its weight. Our research footprint spans foundation models, world models, embodied reasoning, simulation, whole-body control, and many flavors of RL – basically the full stack of robot learning.

This year, we launched:

- GR00T VLA (vision-language-action) foundation models: open-sourced N1 in Mar, N1.5 in June, and N1.6 this month;

- GR00T Dreams: video world model for scaling synthetic data

- SONIC: humanoid whole-body control foundation model

- RL post-training for VLAs and RL recipes for sim2real.” https://x.com/DrJimFan/status/2003879965369290797

OpenAI

Evaluating chain-of-thought with benchmarks

“When AI systems make decisions that are difficult to supervise directly, it becomes important to understand how those decisions are made. One promising approach is to monitor a model’s internal reasoning, rather than only its actions or final outputs.

Modern reasoning models, such as GPT‑5 Thinking, generate an explicit chain-of-thought before producing an answer. Monitoring these chains-of-thought for misbehavior can be far more effective than monitoring a model’s actions and outputs alone. However, researchers at OpenAI and across the broader industry worry that this chain-of-thought “monitorability” may be fragile to changes in training procedure, data sources, and even continued scaling of existing algorithms.

We want chain-of-thought monitorability to hold up as models scale and are deployed in higher-stakes settings. Below we provide a taxonomy for our evaluations.” https://openai.com/index/evaluating-chain-of-thought-monitorability

OpenAI Model Spec (2025/12/18)

“Today OpenAI updated the Model Spec, laying out how models are ‘intended to behave.’ Not marketing. Just explicit rules, priorities, and tradeoffs. Great reading if you’re wondering why models respond the way they do.”

https://x.com/shaunralston/status/2001744269128954350

“The Model Spec outlines the intended behavior for the models that power OpenAI’s products, including the API platform. Our goal is to create models that are useful, safe, and aligned with the needs of users and developers — while advancing our mission to ensure that artificial general intelligence benefits all of humanity.”

https://model-spec.openai.com/2025-12-18.html

OpenAI is hiring a Head of Preparedness

The job listing is gone now, btw.

From Sam Altman: “We are hiring a Head of Preparedness. This is a critical role at an important time; models are improving quickly and are now capable of many great things, but they are also starting to present some real challenges. The potential impact of models on mental health was something we saw a preview of in 2025; we are just now seeing models get so good at computer security they are beginning to find critical vulnerabilities. We have a strong foundation of measuring growing capabilities, but we are entering a world where we need more nuanced understanding and measurement of how those capabilities could be abused, and how we can limit those downsides both in our products and in the world, in a way that lets us all enjoy the tremendous benefits. These questions are hard and there is little precedent; a lot of ideas that sound good have some real edge cases. If you want to help the world figure out how to enable cybersecurity defenders with cutting edge capabilities while ensuring attackers can’t use them for harm, ideally by making all systems more secure, and similarly for how we release biological capabilities and even gain confidence in the safety of running systems that can self-improve, please consider applying. This will be a stressful job and you’ll jump into the deep end pretty much immediately.” https://x.com/sama/status/2004939524216910323?s=20

ChatGPT Persona Settings

“You can now adjust specific characteristics in ChatGPT, like warmth, enthusiasm, and emoji use.” https://x.com/OpenAI/status/2002099459883479311

I personally hate this idea. I don’t like any idea of global settings that supersede the conversation. If I want a particular style, I’ll just put it into my prompt. I think this is a very bad idea, and I discourage everyone from using it.

OpenAI Education Stats

“Documents: OpenAI has sold 700K+ ChatGPT licenses to ~35 US public universities for students and faculty, who used it 14M+ times in September, beating Copilot (Bloomberg)” https://www.bloomberg.com/news/articles/2025-12-18/schools-ink-chatgpt-copilot-deals-with-students-embracing-ai

Codex Helping OpenAI with Code

Sounds like the Claude Code example, above.

“OpenAI built the Sora Android app (which hit #1 app in the world) in just 18 days with the help of Codex” https://x.com/lennysan/status/2001074732293300301

OpenAI and The Dept of Energy

“Deepening our collaboration with the U.S. Department of Energy”

“OpenAI and the U.S. Department of Energy sign memorandum of understanding to accelerate science with AI.”

“The Genesis Mission brings together government, national labs, and industry to apply advanced AI and computing to accelerate scientific discovery. The MOU establishes a framework for information sharing and coordination, and creates a path for the parties to discuss and develop potential follow-on agreements as specific projects take shape. Today, OpenAI also submitted detailed recommendations to the White House Office of Science and Technology Policy on how the United States can strengthen science and technology leadership through AI. That filing outlines why we see 2026 as a ‘Year of Science’ and why access to frontier AI models, compute, and real research environments is essential to accelerating discovery. The agreement with the Department of Energy reflects that vision moving into practice.” https://openai.com/index/us-department-of-energy-collaboration/

GPT Catches an Issue in an MRI that the Radiologist Missed

A Redditor fed his MRI into ChatGPT and it appears to have correctly identified the cause of his sciatic leg pain. https://x.com/reddit_lies/status/2003512194672025826

Odyssey

Odyssey launches World Simulation team/model

“Over the last few years, we’ve learned that simple, causal prediction objectives can give rise to surprisingly general intelligence. In language, predicting the next token forces models to internalize syntax, semantics, and long-range structure.”

“We’re now beginning to see this approach extend beyond language to world models, resulting in nascent world simulators. An early world simulator—like Odyssey-2—is a model trained to predict how the world evolves over time, frame-by-frame, using large amounts of video and interaction data. Rather than relying on hand-crafted rules, it learns latent state, dynamics, and cause-and-effect directly from observations.”

“A general world simulator, although nascent today, will enable us to test cause and effect in complex systems without writing a simulator for each one. If a model can predict how the world changes when you intervene—turn a knob, take an action, change an initial condition—it becomes a practical tool for reasoning, not solely prediction. Over time, this kind of simulator replaces many narrow, hand-built models, and becomes shared infrastructure for building and studying intelligent systems.”

https://odyssey.ml/the-dawn-of-a-world-simulator https://experience.odyssey.ml/

Spotify

The Spotify engineering team seems to have a genuine love for what they do, and they like to share their ideas. I’m drawn to their authentic, sincere passion to share.

Back in early November, they published a blog post about how they use agents to help with coding and build their platform. At the end of November, they published part two. I missed it in my newsletter, so I’m including it here. It’s a really neat, honest assessment of how they leverage agents in their software engineering, in particular how they’ve been using Claude Code as a copilot. I highly recommend skimming through it, even if you’re not technical, just to see a company behaving transparently for the sheer joy of what they do.

1,500+ PRs Later: Spotify’s Journey with Our Background Coding Agent (Part 1)

https://engineering.atspotify.com/2025/11/spotifys-background-coding-agent-part-1

Background Coding Agents: Context Engineering (Part 2)

https://engineering.atspotify.com/2025/11/context-engineering-background-coding-agents-part-2

Twitter/X

Hiring A Safety Team (sounds like Sam Altman’s post above)

“We’re hiring for the safety team at xAI. We work on RL post-training, alignment/model behavior, and reducing catastrophic risk. This work is incredibly high impact” https://x.com/StewartSlocum1/status/2005710683623809440

Full Executive Summaries with Links, Generated by Claude Sonnet 4.5

Google’s Gemini captures nearly 20% of AI chatbot traffic as ChatGPT’s dominance erodes

ChatGPT’s share of generative AI website visits has fallen from 87% to below 70% in just 12 months, while Google’s Gemini has surged from 5% to nearly 20%, signaling the first major shift in the AI chatbot landscape. This redistribution suggests users are actively exploring alternatives beyond OpenAI’s pioneering service, potentially driven by Google’s integration advantages and competitive features.

Similarweb on X: “Gen AI Website Traffic Share, Key Takeaways: → Gemini is approaching the 20% share benchmark. → Grok’s momentum continues. → ChatGPT drops below the 70% mark. 🗓️ 12 Months Ago: ChatGPT: 87.2% Gemini: 5.4% Perplexity: 2.0% Claude: 1.6% Copilot: https://t.co/uyXnFJbxgV” https://x.com/Similarweb/status/2004113864347029663?s=20
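To make the size of that shift concrete, here is a minimal sketch of the arithmetic. The year-ago shares come from the Similarweb post above. The current shares are an assumption: the post only says ChatGPT is "below 70%" and Gemini is "approaching 20%", so the round numbers below are stand-in bounds, not measured values.

```python
# Share of generative AI website visits, in percent (year-ago figures from the post above).
year_ago = {"ChatGPT": 87.2, "Gemini": 5.4}

# Assumption: stand-in bounds taken from the post's thresholds, not exact current shares.
now_bound = {"ChatGPT": 70.0, "Gemini": 20.0}


def point_change(name: str) -> float:
    """Change in share, in percentage points, rounded to one decimal."""
    return round(now_bound[name] - year_ago[name], 1)


def growth_multiple(name: str) -> float:
    """Current share as a multiple of the year-ago share, rounded to two decimals."""
    return round(now_bound[name] / year_ago[name], 2)


chatgpt_change = point_change("ChatGPT")
gemini_change = point_change("Gemini")
gemini_multiple = growth_multiple("Gemini")

print(f"ChatGPT change: {chatgpt_change} points")
print(f"Gemini change: {gemini_change} points ({gemini_multiple}x)")
```

Under those stand-in bounds, ChatGPT has lost at least 17.2 percentage points, while Gemini has gained just under 14.6 points, or a little under 3.7 times its share from a year ago.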

OpenAI’s confusing model naming masks its most capable AI systems

OpenAI routes most free users to its weakest model while labeling everything “GPT-5.2,” obscuring the existence of more powerful “Reasoner” models that few people know about. This creates widespread underestimation of current AI capabilities, as users judge the technology based on the least capable version they encounter rather than the cutting-edge systems actually available.

A lot of people underestimate AI due to the confluence of 4 OpenAI choices: 1) GPT-5.x instant is not a very smart model 2) Most users are free users & the ChatGPT router sends them to instant often 3) The router calls everything GPT-5.2 4) Most people don’t know Reasoners exist https://x.com/emollick/status/2001840267155153362

ChatGPT queries consume same energy as Google searches from 2008

Each ChatGPT interaction uses roughly the same electricity that a Google search required in 2008, suggesting AI chatbots aren’t dramatically more power-hungry than earlier web technologies. This comparison provides rare concrete data on AI energy consumption, countering assumptions that modern AI tools are necessarily massive energy drains. The finding offers a useful baseline for measuring AI’s environmental impact against familiar internet activities.

In fact the average ChatGPT query takes almost exactly as much energy as a Google search in 2008 (that is the last time Google clearly indicated the electrical consumption of a search). https://x.com/emollick/status/2003749085468311853

AI coding tools are forcing programmers to learn entirely new skills or risk falling behind

Former Tesla AI director Andrej Karpathy says programmers now face a “magnitude 9 earthquake” as AI agents, prompts, and stochastic tools become essential parts of coding workflows. He describes feeling dramatically behind despite his expertise, comparing AI tools to “powerful alien technology” that lacks proper documentation. This signals that even elite programmers must fundamentally rethink their craft, not just adopt new features.

Andrej Karpathy on X: “I’ve never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and between. I have a sense that I could be 10X more powerful if I just properly string together what has become available over the last ~year and a failure to claim the boost feels decidedly like skill issue. There’s a new programmable layer of abstraction to master (in addition to the usual layers below) involving agents, subagents, their prompts, contexts, memory, modes, permissions, tools, plugins, skills, hooks, MCP, LSP, slash commands, workflows, IDE integrations, and a need to build an all-encompassing mental model for strengths and pitfalls of fundamentally stochastic, fallible, unintelligible and changing entities suddenly intermingled with what used to be good old fashioned engineering. Clearly some powerful alien tool was handed around except it comes with no manual and everyone has to figure out how to hold it and operate it, while the resulting magnitude 9 earthquake is rocking the profession. Roll up your sleeves to not fall behind.” https://x.com/karpathy/status/2004607146781278521

AI models learned to “think” through problems using verifiable rewards in 2025

The biggest breakthrough was Reinforcement Learning from Verifiable Rewards (RLVR), where AI systems trained on math and coding puzzles spontaneously developed reasoning strategies—breaking problems into steps and self-correcting mistakes. This shift from human feedback to objective rewards allowed much longer training runs and created AI with “jagged intelligence”—simultaneously genius-level in some areas while easily confused in others. The technology enabled new applications like Cursor for coding and Claude running directly on users’ computers, while making programming accessible to non-experts through “vibe coding.”

2025 LLM Year in Review | karpathy https://karpathy.bearblog.dev/year-in-review-2025/

Adobe partners with Runway to integrate AI video generation into creative workflows

Adobe becomes Runway’s preferred API partner, giving Firefly users exclusive early access to Runway’s Gen-4.5 video model before its public release. This partnership integrates advanced AI video generation directly into Adobe’s professional creative tools like Premiere and After Effects, targeting Hollywood studios and major brands. The collaboration represents a shift toward making AI video generation a standard part of professional creative workflows rather than a standalone tool.

Adobe and Runway Partner to Deliver the Next Generation of AI Video for Creators, Studios and Brands https://news.adobe.com/news/2025/12/adobe-and-runway-partner

Spotify engineers refined AI coding agents to automatically merge thousands of code changes

Spotify’s team overcame early AI coding agent limitations by switching to Claude Code and developing sophisticated “context engineering” techniques, successfully merging thousands of pull requests across hundreds of repositories. Their key breakthrough was crafting detailed prompts that describe desired end states rather than step-by-step instructions, while limiting agent tools to maintain predictability. This represents one of the first large-scale deployments of autonomous coding agents in production software development.

Background Coding Agents: Context Engineering (Part 2) | Spotify Engineering https://engineering.atspotify.com/2025/11/context-engineering-background-coding-agents-part-2

Claude for Excel transforms finance workflows with AI-powered spreadsheet automation

Finance professionals report significant productivity gains as Claude integrates directly into Excel, suggesting that the agentic takeoff already seen in coding will spread to other kinds of knowledge work in 2026.

“I’m hearing from many folks across finance industry that Claude for Excel is blowing their minds. The agentic coding takeoff but for other fields is coming in 2026.” https://x.com/alexalbert__/status/2005670179045523595

Claude Opus 4.5 reaches a nearly 5-hour autonomous task horizon, far above trend

METR estimates that Claude Opus 4.5 can complete, with about 50% success, tasks that take human professionals almost five hours, compared with roughly one minute for GPT-4 in 2023. That is a dramatic leap in AI’s ability to handle complex, multi-step tasks without human intervention. The result suggests AI systems are rapidly approaching the capability to perform substantial real-world work autonomously, with significant implications for job automation and productivity.

So, Claude 4.5 came in far above trend in the much-watched METR measure of the task duration that AI can accomplish autonomously at 4 hours 49 minutes. Interestingly, at the harder 80% success threshold, it is GPT-5.1 Codex Max that breaks the trend. In 2023, GPT-4 was a minute. https://x.com/emollick/status/2002208335991337467

We estimate that, on our tasks, Claude Opus 4.5 has a 50%-time horizon of around 4 hrs 49 mins (95% confidence interval of 1 hr 49 mins to 20 hrs 25 mins). While we’re still working through evaluations for other recent models, this is our highest published time horizon to date. https://x.com/METR_Evals/status/2002203627377574113?s=20
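One way to read these numbers is as a count of doublings in task length. The sketch below is only illustrative arithmetic: it assumes "a minute" for GPT-4 means exactly one minute, and it reuses the point estimate and confidence interval quoted from METR above. The function names are my own, not anything from METR.

```python
import math


def to_minutes(hours: int, minutes: int) -> int:
    """Convert an hours-and-minutes duration into minutes."""
    return hours * 60 + minutes


def doublings(start_minutes: float, end_minutes: float) -> float:
    """Number of times the task length doubled going from start to end."""
    return math.log2(end_minutes / start_minutes)


# Assumption: "In 2023, GPT-4 was a minute" is read as exactly 1.0 minutes.
GPT4_MINUTES = 1.0

point = to_minutes(4, 49)     # 50%-time horizon point estimate
ci_low = to_minutes(1, 49)    # lower end of the 95% confidence interval
ci_high = to_minutes(20, 25)  # upper end of the 95% confidence interval

point_doublings = doublings(GPT4_MINUTES, point)
low_doublings = doublings(GPT4_MINUTES, ci_low)
high_doublings = doublings(GPT4_MINUTES, ci_high)

print(f"Point estimate: {point} min, {point_doublings:.1f} doublings")
print(f"95% CI: {low_doublings:.1f} to {high_doublings:.1f} doublings")
```

Under these assumptions the point estimate works out to a bit over eight doublings since GPT-4, and the wide confidence interval spans roughly seven to ten.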

Claude Code now writes 100% of one developer’s contributions

A Claude Code contributor reported that, over the last thirty days, all of their contributions to Claude Code were written by Claude Code itself. This represents a significant milestone in AI coding capability, suggesting the technology has advanced enough to handle complete development workflows rather than just assisting with snippets. The claim, if verified, would demonstrate AI’s potential to fundamentally reshape software development roles and productivity.

“@YashGouravKar1 Correct. In the last thirty days, 100% of my contributions to Claude Code were written by Claude Code” https://x.com/bcherny/status/2004897269674639461?s=20

Anthropic releases Bloom to automatically generate AI safety evaluations in days

Bloom transforms AI alignment testing from months of manual work into automated evaluation suites that can be created and run in just days. The open-source tool generates hundreds of scenarios to test specific behaviors like deception or self-preservation, with its judgments correlating strongly (0.86) with human evaluators. This could dramatically accelerate safety research as AI systems become more capable and require faster, more scalable behavioral testing.

Bloom – an open-source agentic tool that auto-generates behavioral evaluations for AI models by @AnthropicAI It turns what was once painstaking alignment work into a matter of configuration. – Bloom crafts and judges hundreds of scenarios targeting specific traits like https://x.com/TheTuringPost/status/2003629256522498061

Introducing Bloom: an open source tool for automated behavioral evaluations \ Anthropic https://www.anthropic.com/research/bloom

Anthropic secretly taught Claude to control web browsers through Chrome extension

Reverse engineering revealed Claude can navigate websites by taking screenshots and clicking elements, marking a significant leap from text-only AI to autonomous web interaction. This capability was hidden in a Chrome extension, suggesting Anthropic is quietly testing AI agents that can perform complex online tasks without human oversight.

I spent all of Christmas reverse engineering Claude Chrome so it would work with remote browsers. Here’s how Anthropic taught Claude how to browse the web (1/7) https://x.com/pk_iv/status/2005694082627297735

Google tests 30-minute lecture format for NotebookLM audio summaries

Google’s NotebookLM is testing a new “Lecture” mode that generates single-narrator, 30-minute audio presentations from uploaded documents, expanding beyond its current conversation-style summaries. This represents a shift toward longer-form, educational content that could serve students and professionals who need comprehensive audio reviews of dense material, with upcoming support for multiple languages including British English.

Google tests 30-minute Lecture Audio Overviews on NotebookLM https://www.testingcatalog.com/exclusive-google-tests-30-minute-audio-lectures-on-notebooklm/

AI transcription now reads handwriting better than humans at fraction of cost

Cheap AI models have surpassed human accuracy in reading handwritten documents, making it economically feasible to digitize massive archives of historical records that were previously too expensive to transcribe. This breakthrough could unlock centuries of untapped research material in libraries and archives worldwide, fundamentally changing how historians and researchers access primary sources.

“AI transcription from handwriting is now better than human level, and a very cheap model is as good as people. There are now massive troves of old documents that could be made available for research that would have been impossible or prohibitive to transcribe before.” https://x.com/emollick/status/2001676059864080577

Figure robotics founder launches $100M AI lab focused on caring machines

Brett Adcock is self-funding Hark to develop “human-centric AI” that thinks proactively and improves recursively, marking a shift from his $39B robotics company toward AI systems designed to genuinely care about outcomes. This represents a notable pivot by a proven entrepreneur from hardware robotics to software-first AI development.

New AI lab just dropped. Brett Adcock (founder of Figure, the humanoid robotics company valued at $39B) is reportedly self-funding $100M into the new lab called “Hark.” The lab is building “human-centric AI” that can “think proactively, recursively improve and care deeply about https://x.com/rowancheung/status/2001735646117757154

Meta’s Manus launches precision AI image editing with selection tools

Meta’s newly acquired Manus introduced Design View, allowing users to select specific parts of AI-generated images and edit them with targeted prompts rather than regenerating entire images. This addresses a key frustration in AI design workflows where small changes previously required starting over completely. The tool works across desktop and mobile, letting designers maintain lighting and composition while precisely modifying individual elements like furniture colors or adding specific objects.

Introducing Manus Design View https://manus.im/blog/manus-design-view

Meta acquires Manus AI for estimated $1-2 billion after record growth

Manus became the fastest startup ever to reach $100 million in annual recurring revenue, achieving this milestone in just eight months after launch. The acquisition gives Meta a proven AI agent platform that has processed 147 trillion tokens and created 80 million virtual computers, addressing Meta’s need for competitive consumer AI products. Early investor Benchmark likely achieved an 8-12x return in under a year, highlighting the premium valuations in today’s AI market.

Fun fact: Manus is currently SOTA on the Remote Labor Index (RLI) benchmark that @scale_AI and @ai_risks released earlier this year. https://x.com/alexandr_wang/status/2005766785237410107

Manus Joins Meta: Accelerating AI Innovation for Businesses | Meta for Business https://www.facebook.com/business/news/manus-joins-meta-accelerating-ai-innovation-for-businesses

Manus Update: $100M ARR, $125M revenue run-rate https://manus.im/blog/manus-100m-arr

Meta just acquired Manus AI. Ramp Sheets modeled it out: Estimated price: $4-6B based on AI M&A comps Fastest to $100M ARR in history (8 months) Benchmark likely 8-12x’d in under a year https://x.com/RampLabs/status/2005807066351325470

Meta just bought Manus for >$1B and it makes sense. ~8 Consumer AI apps hit $100M+ arr that aren’t big labs: Perplexity: $20B ElevenLabs: $6.6B Lovable: $6.6B Replit: $3B+ Suno: $2.5B Gamma: $2.1B Character: $1B+ Manus: $500M Meta AI has ~no product. This was the cheapest, and https://x.com/deedydas/status/2005798365733478490

“Meta just bought the fastest-growing AI agent company in history for what’s probably $1-2B. The math tells you exactly why Zuckerberg did this deal today. Manus hit $100M ARR in eight months. That’s faster than ChatGPT, faster than Midjourney, faster than any AI product ever.” https://x.com/aakashgupta/status/2005815184976417117

Meta and Hugging Face launch universal standard for AI agent training

OpenEnv creates a single framework where AI agents can be trained on any platform and deployed anywhere without modification, potentially ending the current fragmentation where each company uses incompatible systems. This standardization could accelerate AI agent development by letting researchers and companies share tools and environments seamlessly.

OpenEnv: Meta × Hugging Face’s new open standard for agentic environments. Why it matters: One environment spec and it works everywhere. Train and inference. – Train with TRL, TorchForge, verl, SkyRL, Unsloth – Deploy with the same env you trained on – MCP tool support baked in https://x.com/ben_burtenshaw/status/2005655406522085482

MiniMax releases M2.1 model with enhanced multi-language programming capabilities

Chinese AI company MiniMax launched M2.1, an open-source coding model that excels across multiple programming languages including Rust, Java, and C++, addressing real-world development needs beyond Python-focused competitors. The model demonstrates industry-leading performance on multi-language benchmarks and costs one-tenth the price of Claude Opus while matching or exceeding Claude Sonnet 4.5 on specialized coding tasks. Early adoption by major AI coding platforms like Cline, Factory AI, and BlackBox AI suggests strong market validation for enterprise development workflows.

MiniMax M2.1: Significantly Enhanced Multi-Language Programming, Built for Real-World Complex Tasks – MiniMax News https://www.minimax.io/news/minimax-m21

Shoutout @theo for today’s live stream using M2.1! 🙌 Loved watching a real dev put it through in real-time! Key Takeaways: “M2.1 is really good at long-horizon tasks and could generate surprisingly good results” Best part? It’s 1/10 the price of Opus. Ready to be surprised? https://x.com/MiniMax_AI/status/2003673337671602378

Nvidia acquires AI chip startup Groq for $20 billion cash

Nvidia’s largest deal ever targets Groq’s ultra-fast inference chips that compete directly with Nvidia’s own GPUs for AI workloads. The acquisition brings aboard Groq’s founding team, including creators of Google’s tensor processing unit, while Groq continues operating independently under new leadership. This represents a strategic move to consolidate AI chip competition as demand for faster, cheaper AI processing explodes.

Groq and Nvidia Enter Non-Exclusive Inference Technology Licensing Agreement to Accelerate AI Inference at Global Scale | Groq is fast, low cost inference. https://groq.com/newsroom/groq-and-nvidia-enter-non-exclusive-inference-technology-licensing-agreement-to-accelerate-ai-inference-at-global-scale

Nvidia buying AI chip startup Groq for about $20 billion, biggest deal https://www.cnbc.com/2025/12/24/nvidia-buying-ai-chip-startup-groq-for-about-20-billion-biggest-deal.html

Robots learn real-world skills using only simulated training data

Researchers achieved “zero-shot” deployment where robots trained entirely in Isaac Lab simulation performed real-world tasks without any actual robot training data. This breakthrough could dramatically reduce the time and cost of robot development by eliminating the need for expensive real-world training sessions. The approach represents a major advance in “sim-to-real” transfer, potentially accelerating robotics adoption across industries.

Visual sim2real: zero-shot deploy to the real world, with zero real data. Trained entirely in Isaac Lab. https://x.com/DrJimFan/status/2003879976173818298

Robotics lags decades behind AI despite massive language model breakthroughs

While AI systems can now write Nobel-worthy text, robots still struggle with basic physical tasks that animals master effortlessly. This “Moravec’s paradox” suggests that manipulating the physical world remains AI’s hardest unsolved challenge, potentially requiring entirely different approaches than those powering today’s chatbots.

I’m on a singular mission to solve the Physical Turing Test for robotics. It’s the next, or perhaps THE last grand challenge of AI. Super-intelligence in text strings will win a Nobel prize before we have chimpanzee-intelligence in agility & dexterity. Moravec’s paradox is a https://x.com/DrJimFan/status/2003879965369290797

OpenAI tests whether AI systems can reliably monitor their own reasoning

OpenAI researchers found that AI models can detect flawed reasoning in other AI systems about 60% of the time, but struggle to catch their own mistakes. This matters because as AI systems become more autonomous, we need reliable ways to verify their decision-making processes, and self-monitoring appears insufficient for safety oversight.

Evaluating chain-of-thought monitorability | OpenAI https://openai.com/index/evaluating-chain-of-thought-monitorability/

OpenAI publishes explicit rules governing how its AI models behave

The company released its “Model Spec” detailing specific behavioral guidelines, priorities, and tradeoffs that determine how ChatGPT and other models respond to users. This transparency move helps explain why AI systems make certain choices and includes new protections for teenage users, marking a shift from vague principles to concrete operational rules.

Today @OpenAI updated the Model Spec, laying out how models are ‘intended to behave.’ Not marketing. Just explicit rules, priorities, and tradeoffs. Great reading if you’re wondering why models respond the way they do. Changelog + teen protections in 🧵👇 https://x.com/shaunralston/status/2001744269128954350

OpenAI is hiring a Head of Preparedness as AI models pose mental health risks

OpenAI is creating a new executive role focused on preparing for AI safety challenges, specifically citing concerns about how advanced models might affect users’ mental health. This signals growing recognition among leading AI companies that their increasingly capable systems require dedicated oversight beyond technical development, marking a shift from reactive to proactive safety management.

We are hiring a Head of Preparedness. This is a critical role at an important time; models are improving quickly and are now capable of many great things, but they are also starting to present some real challenges. The potential impact of models on mental health was something we https://x.com/sama/status/2004939524216910323?s=20

OpenAI lets users customize ChatGPT’s personality and communication style

Users can now adjust ChatGPT’s warmth, enthusiasm, and emoji usage through new personalization settings, marking a shift from one-size-fits-all AI interactions to customizable digital assistants. This feature addresses a key user complaint about AI feeling impersonal and could influence how people form relationships with AI systems in daily use.

You can now adjust specific characteristics in ChatGPT, like warmth, enthusiasm, and emoji use. Now available in your “Personalization” settings. https://x.com/OpenAI/status/2002099459883479311

OpenAI sells 700,000 ChatGPT licenses to US universities

About 35 US public universities have adopted ChatGPT for students and faculty, generating more than 14 million uses in September alone and outpacing Microsoft’s competing Copilot tool. This marks a major institutional adoption of AI chatbots in higher education, suggesting universities are moving beyond pilot programs to full-scale integration of AI tools in academic work.

Documents: OpenAI has sold 700K+ ChatGPT licenses to ~35 US public universities for students and faculty, who used it 14M+ times in September, beating Copilot (Bloomberg) https://x.com/Techmeme/status/2001633781388648559

OpenAI built world’s #1 Android app in just 18 days using AI coding assistant

OpenAI’s Sora video generation app reached the top global app ranking after being developed in under three weeks with help from their Codex AI programming tool. This demonstrates how AI coding assistants can dramatically accelerate software development timelines, potentially reshaping how quickly companies can bring new products to market. The speed achievement is particularly notable given the app’s immediate global success and complex video generation capabilities.

OpenAI built the Sora Android app (which hit #1 app in the world) in just 18 days with the help of Codex https://x.com/lennysan/status/2001074732293300301

OpenAI partners with Energy Department to accelerate scientific research

The collaboration expands beyond previous national lab work to support a broader “Genesis Mission” aimed at using AI for scientific breakthroughs. This represents a deepening of public-private AI partnerships in critical research areas, moving from isolated projects to systematic scientific acceleration across government priorities.

“OpenAI and the U.S. Department of Energy are expanding their collaboration on AI and advanced computing in support of national scientific priorities. The agreement builds on our work with DOE’s national labs and advances the Genesis Mission to accelerate scientific discovery.” https://x.com/OpenAINewsroom/status/2001731892253724689

Redditor uses ChatGPT to correctly diagnose sciatic pain from MRI

A Reddit user reportedly had ChatGPT analyze their MRI scan and accurately identify the source of their leg pain, suggesting AI chatbots may soon assist with medical diagnosis. While promising, this represents an uncontrolled anecdote rather than clinical validation, and highlights both AI’s diagnostic potential and the risks of patients self-diagnosing with unregulated tools.

A Redditor fed his MRI into ChatGPT and it appears to have correctly identified the cause of his sciatic leg pain. This could be a watershed moment for AI. https://x.com/reddit_lies/status/2003512194672025826

SoftBank completes massive $40 billion investment in OpenAI startup

SoftBank fulfilled its record-breaking funding commitment to OpenAI by sending a final $22.5 billion last week, giving the Japanese conglomerate an 11% stake in the ChatGPT maker at a $260 billion valuation. The investment represents one of the largest single bets on AI infrastructure, requiring SoftBank to sell its entire $5.8 billion Nvidia stake and other assets to raise cash. This funding will help OpenAI cover soaring costs for training AI models and building data centers as competition intensifies with Google’s rival AI systems.

Softbank fulfills $40 billion OpenAI backing, sources tell CNBC https://www.cnbc.com/2025/12/30/softbank-openai-investment.html?taid=6953e10b1534bb0001d46587

SoftBank races to fulfill $22.5 billion funding commitment to OpenAI by year-end https://finance.yahoo.com/news/exclusive-softbank-races-fulfill-22-233202534.html

Chinese open-source AI models now trail US closed models by just seven months

The gap between released closed US models and released Chinese open-weights models has held at roughly seven to eight months all year, with open releases like DeepSeek matching the capabilities of proprietary US systems within that window. Because these advances are made freely available, the trend could reshape global AI competition dynamics.

“A seven to eight month gap between released closed US models and released Chinese open weights models continues to be a good rule of thumb, as it has been all year.” https://x.com/emollick/status/2003217274510143709

Odyssey launches AI system that predicts world physics from video

Odyssey-2 Pro learns to simulate real-world physics and causality by training on massive video datasets, predicting how scenes evolve frame-by-frame rather than using hand-coded rules. This approach could replace specialized simulators across industries with a single general-purpose system that maintains long-term memory and responds to user interactions in real-time. The company positions this as early progress toward “world simulators” that understand cause-and-effect relationships spanning minutes or hours.

The dawn of a world simulator https://odyssey.ml/the-dawn-of-a-world-simulator

xAI expands safety team to tackle AI alignment and catastrophic risks

Elon Musk’s xAI is actively recruiting machine learning experts for its safety division, focusing on post-training reinforcement learning and preventing dangerous AI behaviors. This hiring push signals growing industry recognition that advanced AI systems require dedicated safety oversight, particularly as companies race to develop more powerful models that could pose unprecedented risks.

We’re hiring for the safety team at xAI We work on RL post-training, alignment/model behavior, and reducing catastrophic risk This work is incredibly high impact If you have strong ML skills and this sounds exciting to you, DM me https://x.com/StewartSlocum1/status/2005710683623809440

0 AI Visuals and Charts: Week Ending December 26, 2025

Top 2 Links of The Week – Organized by Category

Anthropic

My talk from the @aiDotEngineer Code Summit is out! 🚨 “How Claude Code Works” and what we can learn about frontier agent architectures. Coding agents are suddenly really really good, and I’m trying to understand why. In short: better models, simple loop design, and bash tools https://x.com/imjaredz/status/2005731826699063657

AutonomousVehicles

Waymo dropped a blog that effectively confirms the “dependency trap” I tweeted about. The SF incident happened because of a “backlog” of “confirmation checks” requiring remote human operators. Humans is a module in Waymo’s stack. That module does not scale. https://x.com/Yuchenj_UW/status/2003708815934640536
