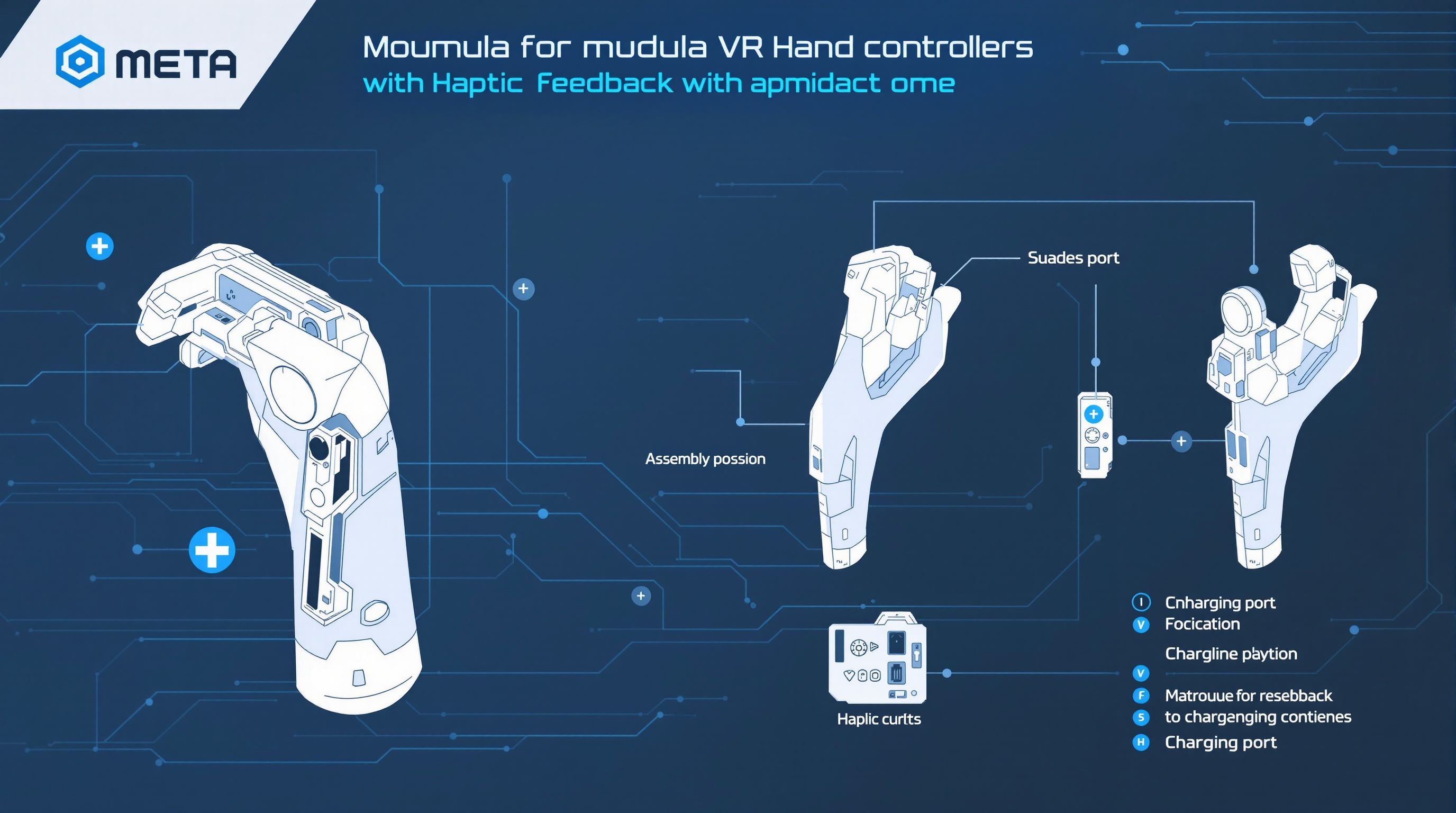

Image created with Flux Pro v1.1 Ultra. Image prompt: Assembly instruction diagram for modular VR hand controllers with haptic feedback, modern gaming tech style, blue gradient and white colors, clean tech background, “META” in modern rounded font, sensor placement indicators, charging port positions shown

DOGE Used a Meta AI Model to Review Emails From Federal Workers | WIRED https://archive.md/Joty1

Meta shuffles AI, AGI teams to compete with OpenAI, ByteDance, Google https://www.axios.com/2025/05/27/meta-ai-restructure-2025-agi-llama

I built an AI Agent that > takes all my competitors Meta Ads > transcribes them > repurposes them > add them to a slack channel > ready to generate in https://t.co/ArtDvdEBf2 comment “ai agent” to get access https://x.com/rom1trs/status/1925518833902633009

Finally completed and merged the SWE_RL environment that was described by Meta’s SWE RL paper into Atropos – A really difficult environment that can teach a model to be a much better coding agent! Check out the PR: https://x.com/Teknium1/status/1927089897833140647

DeepSeek’s R1 leaps over xAI, Meta and Anthropic to be tied as the world’s #2 AI Lab and the undisputed open-weights leader DeepSeek R1 0528 has jumped from 60 to 68 in the Artificial Analysis Intelligence Index, our index of 7 leading evaluations that we run independently https://x.com/i/web/status/1928071179115581671

So so so cool. Llama 1B batch one inference in one single CUDA kernel, deleting synchronization boundaries imposed by breaking the computation into a series of kernels called in sequence. The *optimal* orchestration of compute and memory is only achievable in this way. https://x.com/karpathy/status/1927506788527591853

Ollama can now think! 🤔🤔🤔 For thinking models, and especially useful for very thoughtful models like DeepSeek-R1-0528, Ollama can separate the thoughts and the response. Thinking can also be disabled! This is useful for getting a direct response. This works across https://x.com/ollama/status/1928543644090249565
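
To make "separate the thoughts and the response" concrete, here is a minimal sketch in Python. It is not Ollama's implementation: it assumes the raw model text wraps its reasoning in `<think>...</think>` tags, as DeepSeek-R1 does, and the names `split_thinking` and `SplitOutput` are hypothetical. Note that `keep_thinking=False` only hides reasoning that was already generated; disabling thinking in Ollama itself is a different mechanism.

```python
import re
from typing import NamedTuple


class SplitOutput(NamedTuple):
    thinking: str  # concatenated reasoning text (empty if none was found or kept)
    response: str  # everything outside the <think> blocks


# Assumption: reasoning is wrapped in <think>...</think>, the convention DeepSeek-R1 uses.
_THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_thinking(raw: str, keep_thinking: bool = True) -> SplitOutput:
    """Split raw model text into its reasoning and its final response."""
    # Collect the contents of every <think> block, trimmed of surrounding whitespace.
    thoughts = [m.strip() for m in _THINK_BLOCK.findall(raw)]
    # Remove the blocks from the text; what remains is the direct response.
    response = _THINK_BLOCK.sub("", raw).strip()
    # Optionally drop the reasoning so the caller only sees the response.
    thinking = "\n".join(t for t in thoughts if t) if keep_thinking else ""
    return SplitOutput(thinking=thinking, response=response)


if __name__ == "__main__":
    raw = "<think>2 + 2 is 4.</think>\nThe answer is 4."
    out = split_thinking(raw)
    print("thinking:", out.thinking)
    print("response:", out.response)
```

Keeping the two parts in separate fields lets an application log or display the reasoning on its own, or skip it entirely when only the direct answer is wanted.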

Meta understood that copying DeepSeek piecemeal is not working, and decided to copy the org structure, creating an internal AGI division. a cruel rhyme from Russian school program comes to mind “And you, my friends, no matter your positions, Will never be musicians!” https://x.com/i/web/status/1927944123358581182

Just FYI all the reports from our RL experiments have not been on Qwen, they’ve been on Llama (DeepHermes 8B) – so hopefully that gives some additional assurance on the impact RL can have and that its not random god-mode qwen math improvements from randomness https://x.com/i/web/status/1928184393035559191

RAG is dead, long live agentic retrieval! At LlamaIndex we’ve been saying for a long time that naive RAG is not enough for a modern application. Following from that conviction, we’ve built agentic strategies directly into LlamaCloud that you can adopt with just a few lines of https://x.com/llama_index/status/1928142249935917385