A lot of people, Ethan Mollick in particular, rhetorically wonder what the plan is for Elon Musk’s Grok. From what I’ve heard from Elon on podcasts and in his posts, he is taking “the Jim Fan” approach to AI, which is more ambitious than generative language models alone. Jim Fan is training robots in world-model simulations. Once robots can execute a task in 10,000 simulated worlds, the “real world” is introduced as world 10,001. The robots don’t know the difference, like in Ender’s Game.
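To make the “10,000 worlds, then the real one” idea concrete, here is a toy sketch of that kind of training loop (usually called domain randomization). Everything in it is my own illustration and not anything from Jim Fan’s lab or Tesla: the 1D “robot”, the `friction` parameter, the candidate gains, and the function names are all hypothetical, and I use 1,000 worlds instead of 10,000 so it runs in about a second.

```python
import random

def simulate(gain, friction, steps=50):
    """Push a 1D point from 0.0 toward 1.0; return the final absolute error."""
    pos, vel = 0.0, 0.0
    for _ in range(steps):
        force = gain * (1.0 - pos)               # simple proportional controller
        vel = (1.0 - friction) * vel + 0.1 * force
        pos += 0.1 * vel
    return abs(1.0 - pos)

def average_error(gain, worlds):
    """Mean final error of one controller gain across many simulated worlds."""
    return sum(simulate(gain, friction) for friction in worlds) / len(worlds)

def candidate_gains():
    """Hypothetical search space: gains 0.5, 1.0, ..., 10.0."""
    return [0.5 * i for i in range(1, 21)]

def make_worlds(num_worlds, seed=0):
    """Each 'world' is just a randomly drawn friction value (an assumption)."""
    rng = random.Random(seed)
    return [rng.uniform(0.1, 0.9) for _ in range(num_worlds)]

def train(num_worlds=1000, seed=0):
    """Pick the gain that does best on average over all randomized worlds."""
    worlds = make_worlds(num_worlds, seed)
    return min(candidate_gains(), key=lambda g: average_error(g, worlds))

if __name__ == "__main__":
    best = train()
    # The "real world" is one more friction value the controller never saw.
    real_world_friction = 0.5
    print("chosen gain:", best)
    print("real-world error:", simulate(best, real_world_friction))
```

The point of the sketch is only the shape of the loop: the controller is never tuned on the real world, it is tuned to survive every world in the randomized set, so the real one looks like one more sample.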

In Musk’s case, I think he is working to integrate a few pillars:

1) Grok is a language model. But there are plenty of training tokens available in open data sets and on the internet, so X is not too special there. Rather, Grok is using Twitter/X to train for real-time data retrieval. That’s where X is a solid training ground – even if X is full of garbage, Grok’s ‘personality’ is not trained exclusively on X tokens. The real-time stream is a well-built dojo for real-life integration.

2) Every Tesla is teaching every other Tesla everything it knows. More importantly, every Tesla is teaching the world-simulator model that trains Teslas (like Jim Fan’s NVIDIA lab). Depth, object tracking, and segmentation are critical to embodied machines, and Meta’s release of Segment Anything is an example of that kind of building block.

3) Optimus robots will embody the lessons from both Grok and Tesla, and will challenge the OpenAI-backed 1X/Neo, NVIDIA’s Eureka/Project GR00T, and Brett Adcock’s Figure.

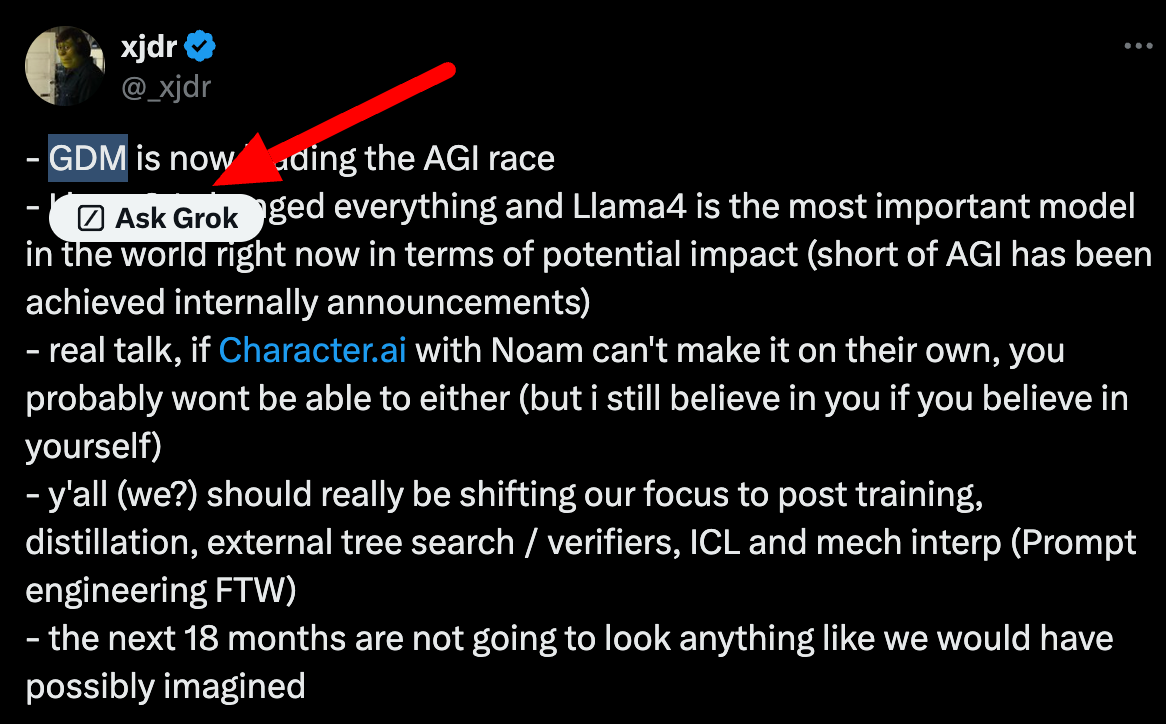

In the meantime, this is the first time I’ve seen Grok actually be helpful on Twitter. I selected text to search for it on Google, and Grok jumped in to help me. It succeeded! So points to Grok on that one.
