Ethics/Legal Security

OpenAI announced an early warning system and released research on whether AI models can aid in creating bioweapons. The research finds that they are currently, at most, mildly useful for the task…

In the largest-of-its-kind evaluation, we found that GPT-4 provides, at most, a mild uplift in biological threat creation accuracy (see dark blue below.) While not a large enough uplift to be conclusive, this finding is a starting point for continued research and deliberation.

Evaluations for LLM-assisted biological threat creation. Current models are not very capable at this task, but we want to be ahead of the curve for assessing this and other potential future risk areas:

FCC moves to outlaw AI-generated robocalls

This guy trained a bot to swipe on Tinder profiles based on his preferences, and then used ChatGPT to message them and set up dates. He communicated with 5,200+ women – and one year later, he’s now engaged to one of them (after ChatGPT suggested he propose).

Mastercard jumps into generative AI race with model it says can boost fraud detection by up to 300%

AI poisoning tool Nightshade received 250,000 downloads in 5 days: ‘beyond anything we imagined’

China approves over 40 AI models for public use in past six months

White House science chief signals US-China co-operation on AI safety

Former Rep. Will Hurd (R-Texas) said in an op-ed Tuesday that he was “freaked out” by a briefing while serving on the board of ChatGPT-maker OpenAI and called for guardrails on the development of “artificial general intelligence (AGI).”

Should 4 People Be Able to Control the Equivalent of a Nuke?

As artificial intelligence becomes more science fact than science fiction, its governance can’t be left to the whims of a few people.

AI Hubs Are Few and Far Between

U.S. AI Hubs are Concentrated in a Few Cities

AI companies will need to start reporting their safety tests to the US government

X/Twitter Restores Searches for Taylor Swift After Temporary Block in Response to Flood of Explicit AI Fakes

George Carlin’s Estate Sues Podcasters Over A.I. Episode

The lawsuit claims that an hourlong comedy special on YouTube violated Carlin’s copyright.

In support of efforts to create safe and trustworthy artificial intelligence (AI), NIST is establishing the U.S. Artificial Intelligence Safety Institute (USAISI). To support this Institute, NIST has created the U.S. AI Safety Institute Consortium. The Consortium brings together more than 200 organizations to develop science-based and empirically backed guidelines and standards for AI measurement and policy, laying the foundation for AI safety across the world. This will help ready the U.S. to address the capabilities of the next generation of AI models or systems, from frontier models to new applications and approaches, with appropriate risk management strategies.

FTC investigating Microsoft, Amazon, and Google investments into OpenAI and Anthropic

How hard is it to cheat in technical interviews with ChatGPT? We ran an experiment.

AI and crypto mining are driving up data centers’ energy use

It seems one issue with copyright characters & image generation is that some images are just ubiquitous.

Even if you only train on licensed data, Mario appears everywhere, including in completely legal screenshots, billboards, film frames, t-shirts, etc. How do you scrub that?

Executives from Meta, X, TikTok, Snap and Discord are testifying before the Senate Judiciary Committee about safeguarding children on their respective platforms. Considering these are the companies driving a lot of AI, it’s important to keep an eye on their (lack of) ethics.

Universal Music Group, the label representing artists such as Taylor Swift, Billie Eilish and Ariana Grande, says that it’ll pull its music from TikTok after failing to reach a deal with ByteDance over royalties. As with this week’s Senate committee story, how publishers view tech companies will affect AI’s ability to draw on content, such as music used in algorithms.

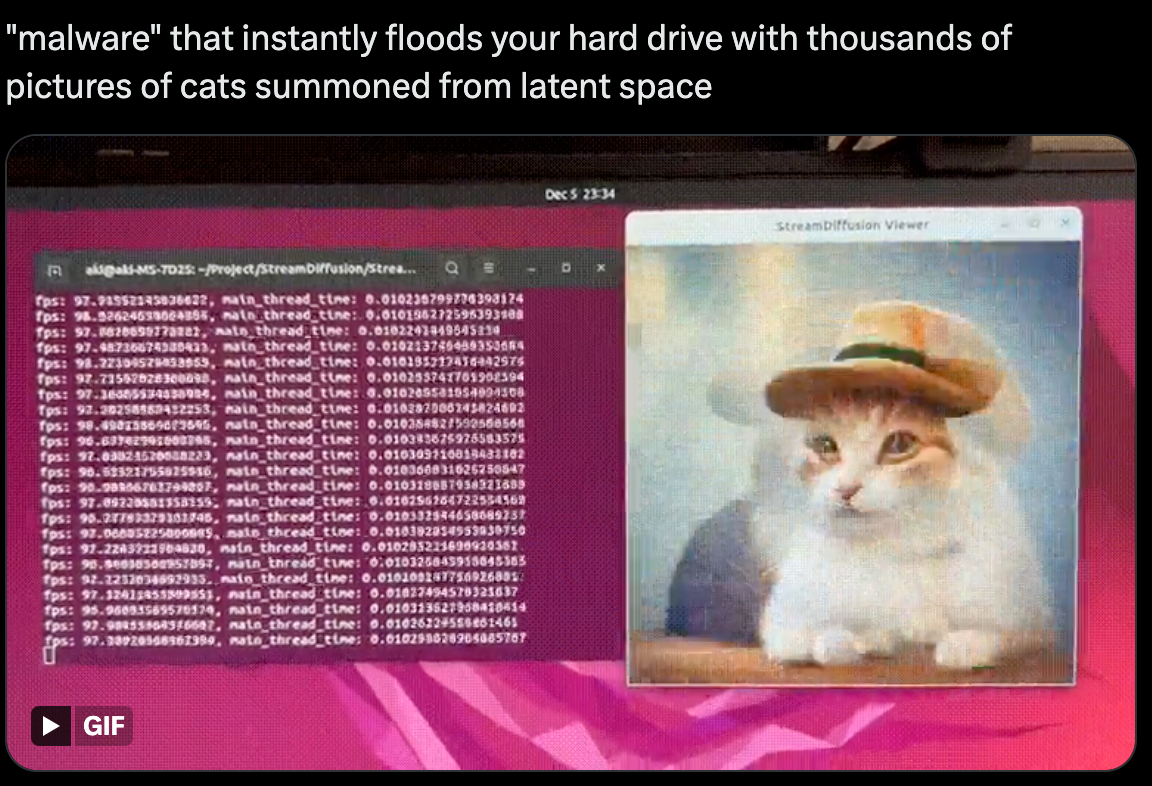

“malware” that instantly floods your hard drive with thousands of pictures of cats summoned from latent space

The security risks of products using LLMs are vast. PromptArmor (YC W24) protects LLM applications from data exfiltration, phishing, and system manipulation through anomaly detection, heuristics, and models.

I have written about “secret cyborgs” – people who use AI to do work, but don’t tell anyone else.

This paper shows one reason for the secrecy: people preferred the creative summaries written by AI, until they were told it was AI, which changed their minds about how good it was.

Many AI Safety Orgs Have Tried to Criminalize Currently-Existing Open-Source AI

We’re Building a Solution with IBM Consulting to Improve Transparency and Auditability for Generative AI Systems

ChatGPT is violating Europe’s privacy laws, Italian DPA tells OpenAI

Italy puts ChatGPT on notice for alleged privacy missteps (yes, again)

From West to the Rest: Growing Geographic Dispersion of AI Jobs in America

The NAIRR Pilot aims to connect U.S. researchers and educators to computational, data, and training resources needed to advance AI research and research that employs AI. Federal agencies are collaborating with government-supported and non-governmental partners to implement the Pilot as a preparatory step toward an eventual full NAIRR implementation. https://nairrpilot.org/

Be Sure To Read “This Week In AI”

This week’s executive overview and top links are here:

AI News #18: Week Ending 02/02/2024 with Executive Summary and Top 12 Stories

The post you just read is an extension of my weekly newsletter, This Week In AI, an executive summary of the top things to know in AI. Each week, I create an accessible overview for laypeople to feel confident they are conversant with the week’s AI developments. I include a curated list of must-click links of the week, to offer everyone a hands-on opportunity to explore the most intriguing updates in artificial intelligence across various categories, including robotics, imagery, video, AR/VR, science, ethics, and more. Beyond the overview, I post these topic-based deeper dives (below). If you haven’t read this week’s overview, I recommend starting there.

- Agents/Copilots

- Artificial General Intelligence (AGI)

- Augmented and Virtual Reality (AR/VR)

- Autonomous Vehicles

- AI Audio

- Business and Enterprise AI

- Chips and Hardware

- Consumer Products

- Education

- Ethics/Legal Security

- Images/Photos

- Locally Run AI Models

- Meta

- OpenAI

- Open Source

- Podcasts/YouTube

- Publishing and News

- Robots and Embodiment

- Science and Medicine

- Video

- Vision/Multimodality

- X/Twitter/Grok

- Tech and Development

Credits/Sources

Most of these links come from just a few incredible sources. Please follow them:

- Robert Scoble: https://x.com/Scobleizer

- Ethan Mollick: https://www.linkedin.com/in/emollick/

- David Armano: https://www.linkedin.com/in/darmano/

- Alan Thompson: https://lifearchitect.ai/

- Theoretically Media: https://www.youtube.com/@TheoreticallyMedia

- The Rundown: https://www.therundown.ai/

- Borriss: https://twitter.com/_Borriss_

- Bilawal Sidhu: https://twitter.com/bilawalsidhu/

- TLDR: https://tldr.tech/ai

- Jeremiah Owyang: https://twitter.com/jowyang

- Wes Roth: https://www.youtube.com/@WesRoth

Previous Issues

- AI News: Week Ending 01/26/2024: https://ethanbholland.com/2024/02/06/ai-news-17-week-ending-01-26-2024-with-executive-summary-and-top-12-stories/

- AI News: Week Ending 01/19/2024: https://ethanbholland.com/2024/02/04/ai-news-16-week-ending-01-19-2024-with-executive-summary-and-top-16-stories/

- AI News: Week Ending 01/12/2024: https://ethanbholland.com/2024/01/15/ai-news-15-week-ending-01-012-2024-with-executive-summary-and-top-13-stories/

- AI News: Week Ending 01/05/2024: https://ethanbholland.com/2024/01/14/ai-news-14-week-ending-01-05-2023-with-executive-summary-and-top-16-stories/

- AI News: Week Ending 12/29/2023: https://ethanbholland.com/2024/01/07/ai-news-12-week-ending-12-29-2023-with-executive-summary-and-top-9-stories/

- AI News: Week Ending 12/22/2023: https://www.linkedin.com/pulse/ai-news-week-ending-12222023-executive-summary-top-links-holland-frx4e

- AI News: Week Ending 12/15/2023: https://www.linkedin.com/pulse/ai-news-week-ending-12152023-ethan-holland-elmee

- AI News: Week Ending 12/08/2023: https://www.linkedin.com/pulse/ai-news-week-ending-12082023-ethan-holland-zabve

- AI News: Week Ending 12/01/2023: https://www.linkedin.com/pulse/ai-news-week-ending-12012023-ethan-holland-rglve

- AI News: Week Ending 11/24/2023: https://www.linkedin.com/pulse/ai-news-week-ending-11242023-ethan-holland-jqvre

- AI News: Week Ending 11/17/2023: https://www.linkedin.com/pulse/ai-news-week-ending-11172023-ethan-holland-ad6le

- AI News: Week Ending 11/10/2023: https://www.linkedin.com/pulse/ai-news-week-ending-11102023-executive-summary-top-three-holland-yjdef/

- AI News: Week Ending 11/03/2023: https://www.linkedin.com/posts/ethanholland_aiebh-ai-generativeai-activity-7131396231678844928-3U8M

- AI News: Week Ending 10/27/2023: https://www.linkedin.com/posts/ethanholland_aiebh-ai-generativeai-activity-7127139342321356800-uBPD

- AI News: Week Ending 10/20/2023: https://www.linkedin.com/pulse/ai-news-week-ending-10202023-ethan-holland-eocpe

- AI News: Week Ending 10/13/2023: https://www.linkedin.com/pulse/ai-news-week-ending-10132023-executive-summary-ethan-holland-nq9bf

- AI News: Week Ending 10/6/2023: https://www.linkedin.com/pulse/ai-news-week-ending-1062023-ethan-holland-b6uhe
