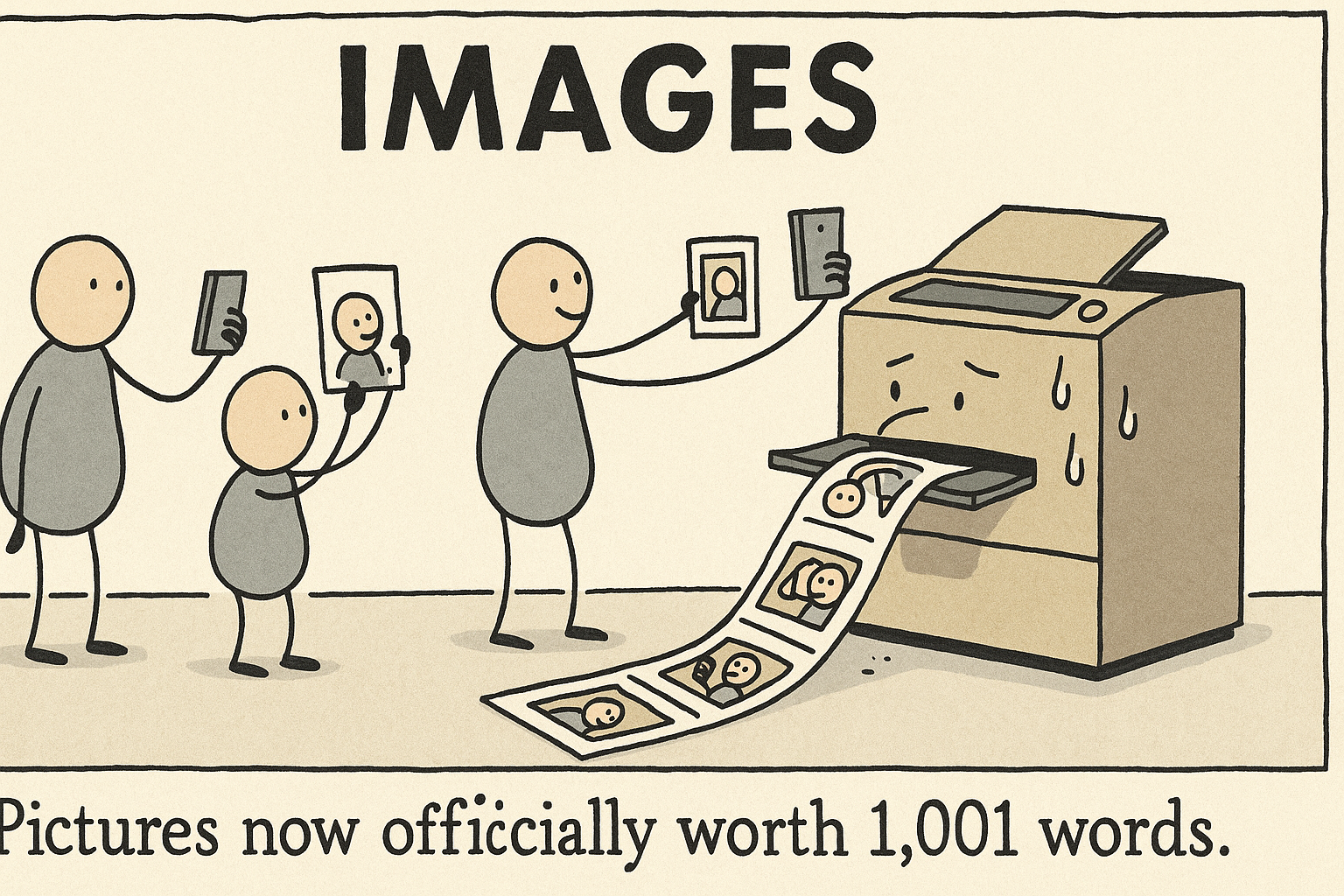

Image created with OpenAI gpt-image-1. Image prompt: Single-panel cartoon with loose, hand‑inked lines, bean‑bodied figures, muted flat colors, minimal props, and deadpan humor: Overworked photocopier sweats as endless selfies march off the output tray and pose for more selfies. Large bold title text centered at top: “IMAGES” Muted colors, flat shading, black ink outlines. 16:9. Caption: “Pictures now officially worth 1,001 words.”

“Image gen is now available in the API! We’re launching gpt-image-1, making ChatGPT’s powerful image generation capabilities available to developers worldwide starting today. ✅ More accurate, high fidelity images 🎨 Diverse visual styles ✏️ Precise image editing 🌎 Rich world https://x.com/OpenAIDevs/status/1915097067023900883

“More cool things about imagegen in the API—developers can control: * moderation sensitivity * image quality/generation speed * quantity of images generated * whether the background is transparent or opaque * output format (jpeg, png, webp)” / X https://x.com/kevinweil/status/1915103388993302646
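The controls listed in that post map onto request parameters for the image generation endpoint. Below is a minimal sketch of how a request might be assembled and checked before sending. The parameter names (`moderation`, `quality`, `n`, `background`, `output_format`) and their allowed values reflect my reading of the gpt-image-1 documentation and should be treated as assumptions; `build_image_request` and `generate_images` are hypothetical helpers, not part of any SDK.

```python
from typing import Any, Dict, List

# Allowed values per option, as I understand the gpt-image-1 docs (assumption).
ALLOWED: Dict[str, set] = {
    "moderation": {"auto", "low"},
    "quality": {"auto", "low", "medium", "high"},
    "background": {"auto", "transparent", "opaque"},
    "output_format": {"png", "jpeg", "webp"},
}


def build_image_request(
    prompt: str,
    *,
    n: int = 1,
    moderation: str = "auto",
    quality: str = "auto",
    background: str = "auto",
    output_format: str = "png",
) -> Dict[str, Any]:
    """Validate the options locally and return keyword arguments for the API call."""
    options = {
        "moderation": moderation,
        "quality": quality,
        "background": background,
        "output_format": output_format,
    }
    for name, value in options.items():
        if value not in ALLOWED[name]:
            raise ValueError(f"{name}={value!r} is not one of {sorted(ALLOWED[name])}")
    if n < 1:
        raise ValueError("n must be at least 1")
    # JPEG has no alpha channel, so a transparent background needs PNG or WebP.
    if background == "transparent" and output_format == "jpeg":
        raise ValueError("transparent background requires png or webp output")
    return {"model": "gpt-image-1", "prompt": prompt, "n": n, **options}


def generate_images(request: Dict[str, Any]) -> List[bytes]:
    """Send the request with the official `openai` package and decode the images.

    Requires `pip install openai`, an OPENAI_API_KEY in the environment,
    and (per the launch thread) a verified organization.
    """
    import base64

    from openai import OpenAI  # imported lazily so the builder works without the SDK

    client = OpenAI()
    response = client.images.generate(**request)
    # gpt-image-1 returns base64-encoded image data rather than URLs.
    return [base64.b64decode(item.b64_json) for item in response.data]
```

A call such as `generate_images(build_image_request("a sweating photocopier", n=2, quality="low", background="transparent"))` would then return two PNG byte strings. Validating locally first avoids spending a request on a combination the API would reject.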

“Nvidia just released Describe Anything 3B – Multimodal LLM for Detailed Localized Image and Video Captioning ⚡ > integrates full-image/ video context with fine-grained local details using a focal prompt and a localised vision backbone with gated cross-attention DAM-3B > https://x.com/reach_vb/status/1914962078571356656

“Nvidia presents Eagle 2.5! – A family of frontier VLMs for long-context multimodal learning – Eagle 2.5-8B matches the results of GPT-4o and Qwen2.5-VL-72B on long-video understanding https://x.com/arankomatsuzaki/status/1914517474370052425

Adobe adds AI models from OpenAI, Google to its Firefly app | Reuters https://www.reuters.com/business/adobe-adds-ai-models-openai-google-its-firefly-app-2025-04-24/

“💥 We just launched ChatGPT’s imagegen model in the API! It went viral in ChatGPT, and our API offers even greater parameter control to match diverse developer use cases. Also, we doubled rate limits for o3 and o4-mini for ChatGPT Plus users. Happy Wednesday 🌞” / X https://x.com/kevinweil/status/1915103387592409215

“We’ve been collaborating closely with developers to understand where image gen can be most useful in the real world. Here are some examples from early adopters across domains like creative tools, consumer apps, enterprise software, and more below. 👇” / X https://x.com/OpenAIDevs/status/1915097072107143322

“New foundation model on image and video captioning just dropped by @NVIDIAAI 🔥 Describe Anything Model (DAM) is a 3B vision language model to generate detailed captions with localized references 😮 The team released the models, the dataset, a new benchmark and a demo 🤩 https://x.com/mervenoyann/status/1914980803055862176

“when your geometry sucks but you have RTX 5090 graphics” / X https://x.com/bilawalsidhu/status/1914438972639506453

“Adobe announced DRAGON on Hugging Face Distributional Rewards Optimize Diffusion Generative Models https://x.com/_akhaliq/status/1914602497148154226

“Don’t sleep on this! 🔥 @Meta dropped swiss army knives for vision with A2.0 license ❤️ > image/video encoders for vision language and spatial understanding (object detection etc) > VLM outperforms InternVL3 and Qwen2.5VL 🔥 > Gigantic video and image datasets 👏 https://x.com/mervenoyann/status/1915723394701467909

Adobe Firefly: The next evolution of creative AI is here | Adobe Blog https://blog.adobe.com/en/publish/2025/04/24/adobe-firefly-next-evolution-creative-ai-is-here

“🩵 we will work hard to make much more beautiful creations!” / X https://x.com/sama/status/1913076070007677017

“you can generate images on perplexity now. the UI is cute and fun. we have also added support for grok 3 and o4-mini for model selection options (which already supports gemini 2.5 pro, claude 3.7, perplexity sonar, gpt-4.1, deepseek r1 1776), and looking into supporting o3 as https://x.com/AravSrinivas/status/1915820052571689245

Image segmentation using Gemini 2.5 https://simonwillison.net/2025/Apr/18/gemini-image-segmentation/

[2504.13987] Entropy Rectifying Guidance for Diffusion and Flow Models https://arxiv.org/abs/2504.13987

“To start using gpt-image-1, verify your organization: https://x.com/OpenAIDevs/status/1915097084308410816

“They also generate a dataset by extending existing segmentation and referring expression generation datasets like REFCOCO, by passing in the images and classes to VLMs and generating captions ⤵️ https://x.com/mervenoyann/status/1914980810970603797

“⚡️ Massive Update Just Dropped: Phase 2.0 for Kling AI! 🎥 KLING 2.0 Master for video generation, 🖼️ KOLORS 2.0 for image generation, 🎮 Multi-Elements Editor, 🎨 Image Editing & Restyle… Kling AI 2.0 is all about empowering creators to bring meaningful stories to life — with https://x.com/Kling_ai/status/1912040247023788459

“You can transform any document into LLM-ready formats using MegaParse. > PDF, Powerpoint, Word > Tables, TOC, Headers, Footers, Images Fully open-source python library. https://x.com/LiorOnAI/status/1915792212157407385

“@WenhuChen @WeijiaShi2 at a glance, this is quite diff than what we did fwiw. the behaviors you see in o3 and o4-mini are all emergent from large-scale RL. we just give them access to python and the ability to manipulate images, the rest is up to the model” / X https://x.com/mckbrando/status/1912704921016869146

[2504.13162v1] Personalized Text-to-Image Generation with Auto-Regressive Models https://arxiv.org/abs/2504.13162v1

“Perception Encoder (PE) Core is a new state-of-the-art family of vision encoders that can be aligned for both vision-language and spatial tasks 👏 For zero-shot image tasks, it outperforms latest sota SigLIP2 🔥 https://x.com/mervenoyann/status/1915723399642435634

“Vision models still do much worse than humans in being able to accurately answer questions about images, but progress has been rapid and o3 represents a big jump forward.” / X https://x.com/emollick/status/1914609409172418756

“Have you tested out the new LMArena in Beta yet? ⏰In less than 24 hours, the community response has been incredible – we’ve already made tweaks based on your feedback! You’ll notice: 🌔 Dark/Light mode toggle in the top right ✂️ Copy/paste images directly into the prompt https://x.com/lmarena_ai/status/1913260116465656220

“OmniV-Med: Scaling Medical Vision-Language Model for Universal Visual Understanding “First, we construct OmniV-Med-Instruct, a comprehensive multimodal medical dataset containing 252K instructional samples spanning 14 medical image modalities and 11 clinical tasks. Second, we https://x.com/iScienceLuvr/status/1914624649142657199

OpenAI has launched the ChatGPT Image Library – ChatGPT – OpenAI Developer Community https://community.openai.com/t/openai-has-launched-the-chatgpt-image-library/1230140

“Excited to release ReflectionFlow — a framework that enables text-to-image diffusion models to refine their own output through reflection 💬🔥 We release GenRef-1M, a large-scale dataset consisting of (good_img, bad_img, reflection) triplets. Through extensive experiments, we https://x.com/RisingSayak/status/1915338106510905767

[2409.03516v1] LMLT: Low-to-high Multi-Level Vision Transformer for Image Super-Resolution https://arxiv.org/abs/2409.03516v1

“Super excited to share Chipmunk 🐿️- training-free acceleration of diffusion transformers (video, image generation) with dynamic attention & MLP sparsity! Led by @austinsilveria, @SohamGovande – 3.7x faster video gen, 1.6x faster image gen. Kernels written in TK ⚡️🐱 1/” / X https://x.com/realDanFu/status/1914376553745850454

“Entropy Rectifying Guidance for Diffusion and Flow Models “1. We propose Entropy Rectifying Guidance (ERG), a guidance mechanism based on modifying the energy landscape of the attention layers. 2. Since our guidance mechanism does not require unconditional inference, it is https://x.com/iScienceLuvr/status/1914596593527087341

Image generation – OpenAI API https://platform.openai.com/docs/guides/image-generation?image-generation-model=gpt-image-1

“Figma is leveraging gpt-image-1 to generate and edit images from simple prompts, enabling designers to rapidly explore ideas and iterate visually directly in Figma. https://x.com/OpenAIDevs/status/1915097075722899818

“Dreamina AI has officially launched Seedream 3.0 – its cutting-edge new model. The wait is over! This is the latest breakthrough in AI image generation. With Seedream 3.0, Dreamina AI delivers: 🎬 Cinematic-quality visuals 🖼️ 2K resolution output 💎 Ultra-realistic textures & https://x.com/dreamina_ai/status/1914683899671875972

“GPT Image Generation Try the latest image generation model from OpenAI that supports contextual image generation and editing. https://x.com/perplexity_ai/status/1915819619333640647

“// Scaling Reasoning in Diffusion LLMs via RL // This new paper proposes d1, a two‑stage recipe that equips masked diffusion LLMs with strong step‑by‑step reasoning. • Two‑stage pipeline (SFT → diffu‑GRPO) – d1 first applies supervised fine‑tuning on the 1 k‑example s1K https://x.com/omarsar0/status/1912871174817939666

Introducing our latest image generation model in the API | OpenAI https://openai.com/index/image-generation-api/

“Introducing OpenAI o3 and o4-mini—our smartest and most capable models to date. For the first time, our reasoning models can agentically use and combine every tool within ChatGPT, including web search, Python, image analysis, file interpretation, and image generation. https://x.com/OpenAI/status/1912560057100955661

“Flex.2-preview is here with text to image, universal control (line, pose, depth), and inpainting all baked into one model. Fine tunable with AI-Toolkit, Apache 2.0 license, 8B parameters. Link in 🧵 https://x.com/ostrisai/status/1914799647899722198

[2504.12739v1] Mask Image Watermarking https://arxiv.org/abs/2504.12739v1

“Fuck it, starting today you can run inference across 30,000+ Flux and SDXL LoRAs on the Hugging Face Hub via Inference Providers (powered by @FAL ⚡) And.. it gets better, you can generate over 40+ images in less than A DOLLAR! Go try it now on your favourite LoRA on HF 🤗 https://x.com/reach_vb/status/1915830938438717777

“Nvidia just dropped Describe Anything on Hugging Face Detailed Localized Image and Video Captioning https://x.com/_akhaliq/status/1914917564137828622

“8. Seedream 3.0 @ByteDanceOSS Presents a bilingual (Chinese-English) image generation model with major improvements in visual fidelity, text rendering, and native high-resolution outputs up to 2K https://x.com/TheTuringPost/status/1914448564828430361

ChatGPT now lets users create fake images of politicians. We stress-tested it | CBC News https://www.cbc.ca/news/canada/chatgpt-fake-politicians-1.7507039

“imagegen is launched in the openai api! build cool stuff plz” / X https://x.com/sama/status/1915110344894435587

“I have always dreamt about a moment in time when I could take a picture or video of something I see in the street and creatively remix any part of it just by describing it. We are finally here. https://x.com/c_valenzuelab/status/1913761070725935178