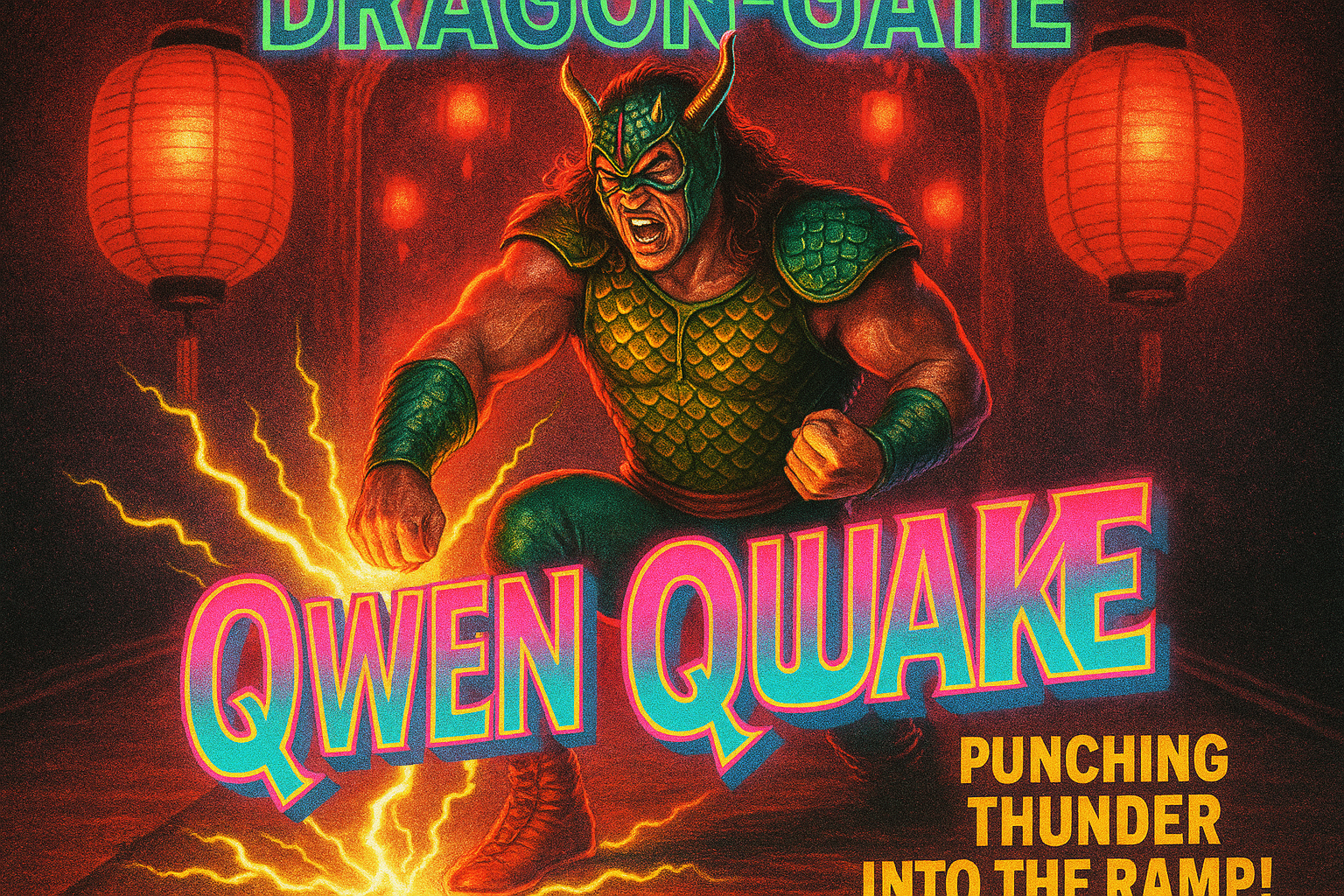

Image created with OpenAI GPT-Image-1. Image prompt: over-the-top 1990s pro-wrestling promo poster, dragon-gate entrance featuring “Qwen Quake” wearing scaled armor punching thunder into the ramp; red lantern glow, grainy print texture, vivid neon titles

🚀 Introducing Qwen3-MT – our most powerful translation model yet! Trained on trillions of multilingual tokens, it supports 92+ languages—covering 95%+ of the world’s population. 🌍✨ 🔑 Why Qwen3-MT? ✅ Top-tier translation quality ✅ Customizable: terminology control, domain https://x.com/Alibaba_Qwen/status/1948406830688018471

🚀 We’re excited to introduce Qwen3-235B-A22B-Thinking-2507 — our most advanced reasoning model yet! Over the past 3 months, we’ve significantly scaled and enhanced the thinking capability of Qwen3, achieving: ✅ Improved performance in logical reasoning, math, science & coding https://x.com/Alibaba_Qwen/status/1948688466386280706

Less than two weeks after Kimi K2’s release, @Alibaba_Qwen’s new Qwen3-Coder surpasses it with half the size and double the context window. Despite a significant initial lead, open source models are catching up to closed source and seem to be reaching escape velocity. https://x.com/cline/status/1948072664075223319

Qwen COOKED – beats Kimi K2 and competitive to Claude Opus 4 at 25% total parameters 🤯 https://x.com/reach_vb/status/1947357343101960424

Qwen3-235B-A22B scored 41% on ARC-AGI-1 without thinking! That’s the same level as Gemini 2.5 Pro, Sonnet 4 or o3-low with thinking. But it might be trained on it, if not, then it’s insane https://x.com/scaling01/status/1947351789222711455

RT @itsPaulAi: Wait so Alibaba Qwen has just released ANOTHER model?? Qwen3-Coder is simply one of the best coding model we’ve ever seen.… https://x.com/ClementDelangue/status/1947775783067603188

RT @lmstudio: Qwen/Qwen3-Coder with tool calling is supported in LM Studio 0.3.20, out now. 480B parameters, 35B active. Requires about 25… https://x.com/huybery/status/1948327670493970534

The new Qwen3 update takes back the benchmark crown from Kimi 2. Some highlights of how Qwen3 235B-A22B differs from Kimi 2: – 4.25x smaller overall but has more layers (transformer blocks); 235B vs 1 trillion – 1.5x fewer active parameters (22B vs. 32B) – much fewer experts in https://x.com/rasbt/status/1947393814496190712
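The size ratios quoted in the post above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the figures in the post; treating "1 trillion" as exactly 1000B is an assumption, and the dictionary names are illustrative.

```python
# Parameter counts in billions, as quoted in the post above.
# Assumption: "1 trillion" is taken as exactly 1000B.
qwen3 = {"total_b": 235, "active_b": 22}
kimi_k2 = {"total_b": 1000, "active_b": 32}

# How many times larger Kimi K2 is, overall and per token (active parameters).
total_ratio = kimi_k2["total_b"] / qwen3["total_b"]
active_ratio = kimi_k2["active_b"] / qwen3["active_b"]

# Share of each model's parameters that is active for a given token.
qwen3_active_share = qwen3["active_b"] / qwen3["total_b"]
kimi_active_share = kimi_k2["active_b"] / kimi_k2["total_b"]

print(f"total: {total_ratio:.2f}x, active: {active_ratio:.2f}x")
print(f"active share: Qwen3 {qwen3_active_share:.1%}, Kimi K2 {kimi_active_share:.1%}")
```

Under that assumption the ratios come out near the post's rounded 4.25x and 1.5x figures, and Qwen3 activates a noticeably larger share of its weights per token than Kimi K2 does.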

The updated Qwen3-235B-A22B is now the best non-reasoning models period. It beats Kimi-K2, Claude-4 Opus and DeepSeek V3 on multiple benchmarks like GPQA, AIME, ARC-AGI, LiveCodeBench or BFCLv3, just to name a few. https://x.com/scaling01/status/1947350866840748521

So to recap: – Yesterday, frontier closed model equivalent reasoning model from Qwen, – This morning, frontier closed model equivalent reasoning vision capabilities from stepfun – sometime today(?) a frontier video model from wan? All open source What is America doing? https://x.com/Teknium1/status/1948744914876920039

✅ Try out @Alibaba_Qwen 3 Coder on vLLM nightly with “qwen3_coder” tool call parser! Additionally, vLLM offers expert parallelism so you can run this model in flexible configurations where it fits. https://x.com/vllm_project/status/1947780382847603053

🚨 Model Update: Qwen3-coder is in the WebDev Arena! @Alibaba_Qwen have released their best coding model to date and it’s now live in WebDev Arena awaiting your hardest prompts for real world testing. Prompt: “style a basic login form using Tailwind CSS with dark mode https://x.com/lmarena_ai/status/1948399802947084347

Another incredible OSS model release this summer: the new Qwen 3 update is now live on @togethercompute API. https://x.com/vipulved/status/1947871449282216055

Bye Qwen3-235B-A22B, hello Qwen3-235B-A22B-2507! After talking with the community and thinking it through, we decided to stop using hybrid thinking mode. Instead, we’ll train Instruct and Thinking models separately so we can get the best quality possible. Today, we’re releasing https://x.com/Alibaba_Qwen/status/1947344511988076547

Did a benchmark with the new Qwen3 Reasoner 220B on Arena-hard v1 It scores an 89% winrate over gpt4-0314, 4o scores an 81% dont have numbers for o3/4o-mini etc but its basically saturated a near perfect win rate. nicee https://x.com/Teknium1/status/1948836009183224132

Open source models 📈 qwen3-coder is available in Cline https://x.com/cline/status/1948452627278430376

Please note, we’re not able to reproduce the 41.8% ARC-AGI-1 score claimed by the latest Qwen 3 release — neither on the public eval set nor on the semi-private set. The numbers we’re seeing are in line with other recent base models. In general, only rely on scores verified by https://x.com/fchollet/status/1947821353358483547

Qwen just released a 480B coding model & a space to try it out for web dev. Fun! Model: https://x.com/ClementDelangue/status/1947780025886855171

Qwen-MT: Where Speed Meets Smart Translation | Qwen https://qwenlm.github.io/blog/qwen-mt/

RT @Alibaba_Qwen: Performance of Qwen3-Coder-480B-A35B-Instruct on SWE-bench Verified! https://x.com/QuixiAI/status/1947773200953217326

RT @cline: Qwen3-Coder is now available in Cline 🧵 New 480B parameter model with 35B active parameters. > 256K context window > comparabl… https://x.com/Alibaba_Qwen/status/1947954292738105359

RT @GregKamradt: Anyone have a connection at @Alibaba_Qwen? Trying to reproduce the results on @arcprize and getting different metrics Wa… https://x.com/clefourrier/status/1947994251410682198

RT @OpenRouterAI: 🟣New: Qwen3-Coder by @Alibaba_Qwen – 480B params (35B active) – Native 256K context length, extrapolates to 1M – Outperf… https://x.com/huybery/status/1947808085504102487

RT @UnslothAI: @Alibaba_Qwen Congrats guys on another epic release! We’re uploading Dynamic GGUFs, and one with 1M context length so you gu… https://x.com/QuixiAI/status/1947773516368994320

RT @WolframRvnwlf: I’m now using Qwen3-Coder in Claude Code. Works with any model actually, but this is surely the best one currently. The… https://x.com/huybery/status/1948184493631959536

We’ve updated Qwen3 and made excellent progress. The non‑reasoning model now delivers significant improvements across a wide range of tasks and many of its capabilities already rival those of reasoning models. It’s truly remarkable, and we hope you enjoy it! https://x.com/huybery/status/1947345040470380614

Wow the new qwen reasoner at only 232B params is as good as the top closed frontier lab models Big day for OS https://x.com/Teknium1/status/1948711699013665275

NVIDIA’s Canary-Qwen-2.5B 1st place on the @HuggingFace leaderboard for automatic speech recognition – lowest word error rate (WER) ever recorded on the Hugging Face OpenASR leaderboard: 5.63%. – its the first speech model built on top of an existing LLM. – At its core, it https://x.com/rohanpaul_ai/status/1946823138932863210

AudioRAG is becoming real! Just built a demo with ColQwen-Omni that does semantic search on raw audio, no transcription needed. Drop in a podcast, ask your question, and it finds the exact chunks where it happens. You can also get a written answer. What’s exciting: it skips https://x.com/fdaudens/status/1946226098905169967

so many open LLMs and image LoRAs dropped past week, here’s some picks for you 🫡 LLMs > ByteDance released a bunch of translation models called Seed-X-RM (7B) > NVIDIA released reasoning models of which 32B surpassing the giant Qwen3-235B with cc-by-4.0 license 👏 > LG released https://x.com/mervenoyann/status/1948018642462933149

Looking at the HuggingFace configs, this is a wider/shallower model compared to Qwen3. – 62 layers vs 94 – dim 6144 vs 4096 – 160 experts vs 128 – 96 attn heads vs 64 Curious why the architectural change? Qwen3.5? https://x.com/nrehiew_/status/1947770826943549732
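The "wider/shallower" reading in the post above can be made concrete by taking the ratio of each quoted config value. This is a rough sketch built only from the numbers in the post; the dictionary keys are illustrative labels, not the actual HuggingFace config field names.

```python
# Config values as quoted in the post above: (new model, Qwen3).
# Keys are illustrative labels, not real config field names.
quoted = {
    "layers": (62, 94),
    "dim": (6144, 4096),
    "experts": (160, 128),
    "attn_heads": (96, 64),
}

# Ratio of the new model's value to Qwen3's value for each quantity.
ratios = {name: new / old for name, (new, old) in quoted.items()}

for name, ratio in ratios.items():
    direction = "larger" if ratio > 1 else "smaller"
    print(f"{name}: {ratio:.2f}x ({direction} than Qwen3)")
```

On these figures the hidden dimension and head count both grow by 1.5x while the layer count drops to about two thirds, which is what "wider/shallower" describes.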

RT @SIGKITTEN: qwen3-coder, running locally I had it set up testing infra using minunit and gcov and write some tests on a small ~5000 lo… https://x.com/huybery/status/1948184517673644466

missed this, @NVIDIAAIDev silently dropped Open Reasoning Nemotron models (1.5-32B), SoTA on LiveCodeBench, CC-BY 4.0 licensed 🔥 > 32B competing with Qwen3 235B and DeepSeek R1 > Available across 1.5B, 7B, 14B and 32B size > Supports upto 64K output tokens > Utilises GenSelect https://x.com/reach_vb/status/1947331118983696907

RT @reach_vb: Lets GOOO! @NVIDIAAIDev just dropped Canary Qwen 2.5 – SoTA on Open ASR Leaderboard, CC-BY licensed 🔥 > Works in both ASR an… https://x.com/reach_vb/status/1946087224346313175

Now it’s possible to do RAG with any-to-any models 🔥 Learn how to search in a video dataset and generate using OmniEmbed, an all modality retriever, and Qwen2.5-Omni, any-to-any model in this notebook 🤝 https://x.com/mervenoyann/status/1947285360926494911

Incredible results! Open source is winning. https://x.com/AravSrinivas/status/1947810865685925906

This might be the best coding model yet. General-purpose is cool, but if you want the best at coding, specialization wins. No free lunch. https://x.com/rasbt/status/1947995162782638157

This is the dumbest open source models ever will be https://x.com/reach_vb/status/1947364340799283539